The Central Thesis

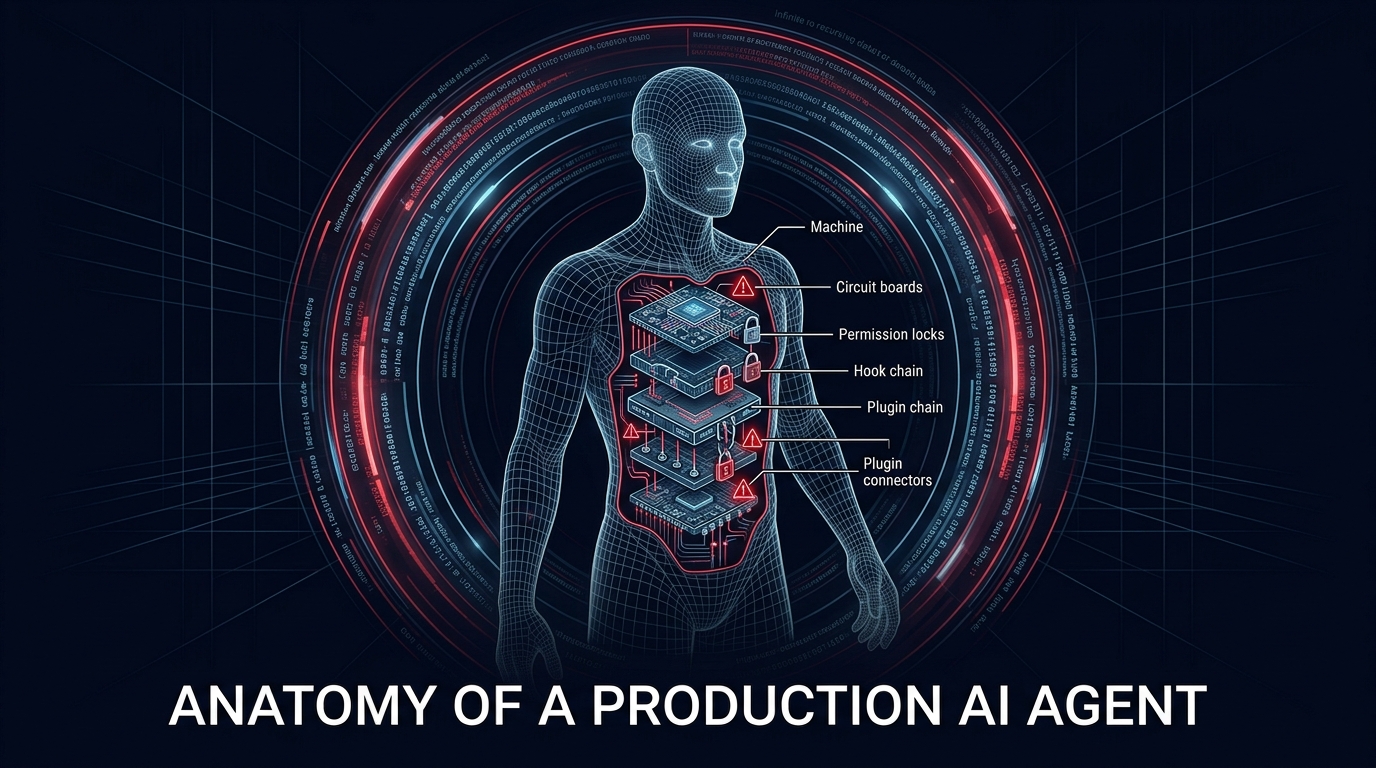

AI agents are not chatbots with tool access. They are autonomous execution runtimes with operating-system-class security requirements -- and we are deploying them without the constraints that made operating systems safe.

"The model is not thinking. It is issuing instructions inside a control loop."

"The attack surface is not the model. It is the extension ecosystem."

"We recreated kernel security architecture -- without the constraints that make it safe."

"The agents are already running. And you cannot see them."