Table of Contents

- Introduction

- Part I: Risk Framework

- Part II: Documentation & Assessment

- Part III: Implementation

- Conclusion: The Path Forward

Understanding the World's First Comprehensive AI Regulation

When the European Union's AI Act entered into force on August 1, 2024, it created the world's first comprehensive legal framework for artificial intelligence. This isn't just another compliance checkbox. The regulation fundamentally changes how you develop, deploy, and monitor AI systems in the European market, with penalties reaching €35 million or 7% of global annual turnover for the most serious violations.

The prohibited practices became enforceable on February 2, 2025. High-risk system requirements take full effect August 2, 2026. Safety components of regulated products face an extended deadline of August 2, 2027. Organizations with established responsible AI practices may find that a substantial share of requirements (commonly cited at 40–50%) is already addressed. Those starting now face a compressed timeline requiring immediate action.

Critical Reality Check: Published estimates suggest that roughly 5–18% of AI systems will fall under high-risk classification. Estimated compliance costs range from €29,000 to €52,000 annually per system, with initial setup costs spanning €193,000 to €15 million depending on organization size. These are estimates rather than audited figures, but they indicate the order of magnitude you should budget for.

The AI Act doesn't operate in isolation. It intersects with GDPR, medical device regulations, financial services rules, and emerging global AI governance standards. Unlike sector-specific regulations, this framework applies horizontally across all industries, creating unified requirements that reshape AI governance worldwide. The "Brussels Effect" is already happening — prepare for the EU AI Act and you're effectively preparing for the future of global AI governance.

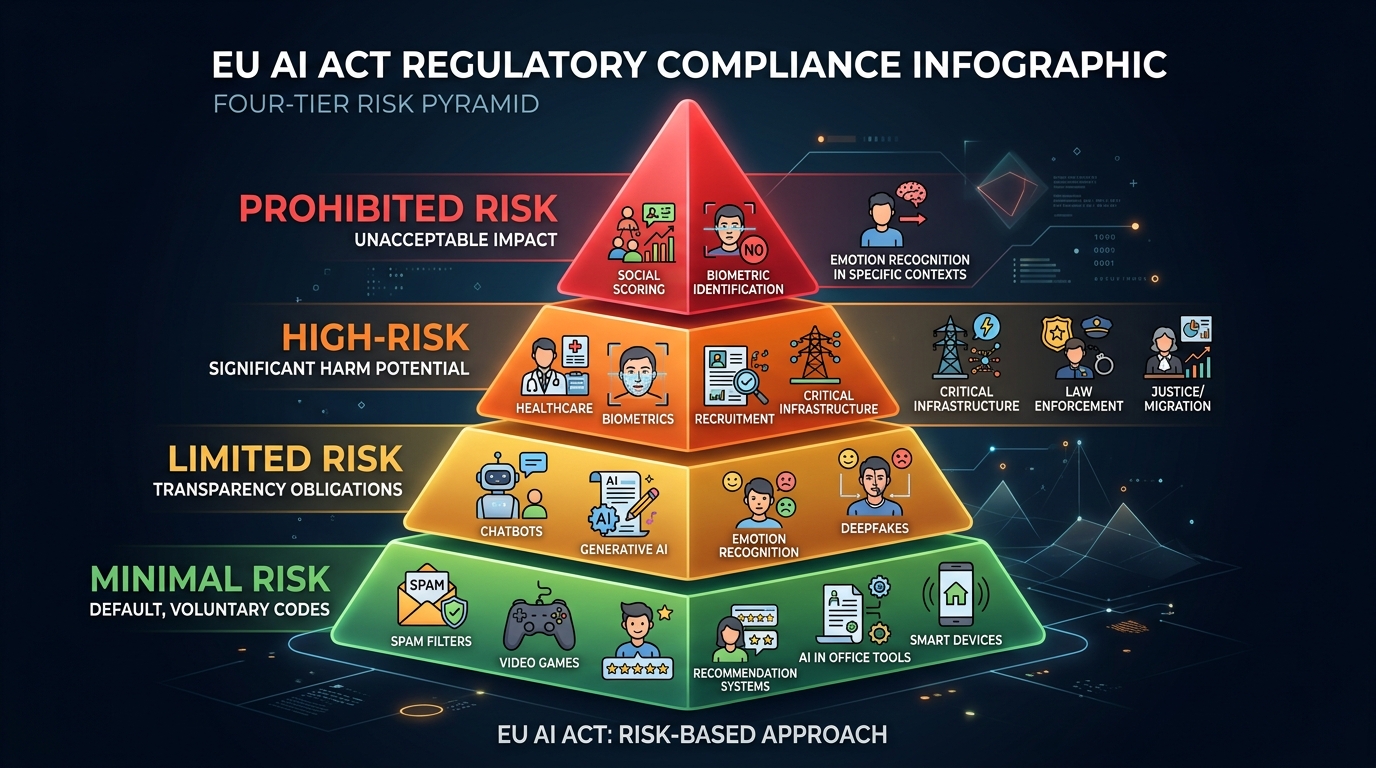

The Four-Tier Risk Framework Determines Your Obligations

The EU AI Act establishes a pyramid-shaped regulatory structure where requirements intensify with risk level. Understanding where your AI systems fall within this framework is the critical first step toward compliance. This isn't about theoretical classification — your placement determines whether you face absolute prohibitions, comprehensive documentation requirements, transparency obligations, or voluntary measures.

Unacceptable Risk: Absolute Prohibitions Now in Force

Eight AI practices are banned under Article 5, with enforcement beginning February 2, 2025. Risk mitigation cannot offset these prohibitions, and the only exceptions are the narrow ones written into Article 5 itself (covered below). You cannot comply your way around them: if your system falls into these categories, you must decommission it immediately.

Penalty for violations: €35 million or 7% of global annual turnover, whichever is higher; for SMEs and start-ups, whichever is lower. These are ceilings rather than fixed amounts, but the size of the ceiling signals serious enforcement intent.
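The "whichever is higher" rule, and its reversal for smaller firms, is easy to get backwards. Here is a minimal Python sketch of the fine ceiling. It assumes only the two tiers quoted in this guide, and the function and tier names are hypothetical:

```python
def fine_cap_eur(annual_turnover_eur: float, tier: str, is_sme: bool = False) -> float:
    """Maximum administrative fine for a penalty tier (hypothetical helper).

    "prohibited"  -> EUR 35M or 7% of worldwide annual turnover
    "obligations" -> EUR 15M or 3% of worldwide annual turnover
    Larger undertakings face the higher amount; SMEs and start-ups the lower.
    """
    tiers = {
        "prohibited": (35_000_000, 0.07),
        "obligations": (15_000_000, 0.03),
    }
    fixed_amount, turnover_share = tiers[tier]
    turnover_based = annual_turnover_eur * turnover_share
    pick = min if is_sme else max  # the SME rule flips the comparison
    return pick(fixed_amount, turnover_based)


# EUR 2bn turnover: 7% (about EUR 140M) exceeds the EUR 35M fixed amount.
print(fine_cap_eur(2_000_000_000, "prohibited"))
# SME with EUR 10M turnover: the lower amount, 3% (about EUR 300k), applies.
print(fine_cap_eur(10_000_000, "obligations", is_sme=True))
```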

Subliminal manipulation covers AI deploying techniques beyond conscious awareness, or purposefully manipulative or deceptive techniques, to distort behavior in ways that cause significant harm. Think dark patterns and neuro-marketing tools that bypass rational defense mechanisms. Standard advertising likely falls outside this prohibition, but systems designed to exploit addiction in gambling or gaming, or to manipulate voting behavior, cross the line. The "significant harm" threshold acts as a filter, but don't rely on gray areas. Review your user experience algorithms and recommender systems for manipulative intent.

Vulnerability exploitation prohibits systems targeting age, disability, or socioeconomic circumstances to manipulate behavior causing harm. A voice-activated toy encouraging dangerous play violates this rule. So does a predatory loan algorithm targeting financially distressed individuals with confusing terms. The inclusion of "social or economic situation" broadens scope significantly, potentially impacting financial products targeting low-income demographics.

Social scoring is absolutely banned. This means evaluating individuals based on social behavior or personal characteristics in a way that leads to detrimental treatment in unrelated contexts, or to treatment disproportionate to that behavior. This effectively prohibits social credit systems where behavior in one domain (traffic fines) automatically impacts another (healthcare access or housing eligibility). The prohibition applies to public and private actors alike, not only to government use.

Predictive policing via profiling is banned when assessing criminal risk solely from personality traits or profiling characteristics. The key word is "solely" — human-assisted assessment incorporating objective facts remains permitted. Law enforcement can use AI to analyze crime patterns, but cannot deploy systems that automatically flag individuals as high-risk based purely on demographic or behavioral profiles without human evaluation.

Untargeted facial scraping bans business models built on harvesting social media images into massive identity databases. Often called the "Clearview AI clause," it prohibits creating or expanding facial recognition databases through untargeted scraping of facial images from the internet or CCTV footage. Developers should audit training data sources (LAION, Common Crawl) to confirm they aren't feeding scraped facial images into such databases. The prohibition has applied since February 2, 2025. If your facial recognition database includes scraped faces, rebuild it on compliant data and delete the scraped dataset.

Workplace and education emotion recognition is prohibited due to scientific immaturity and power imbalances in these settings. Tools analyzing video interviews for "enthusiasm" or "honesty" violate this ban. Systems monitoring student engagement through emotion detection are prohibited. The exemption for medical or safety purposes (driver fatigue monitoring in logistics) exists but remains narrowly defined.

Sensitive biometric categorization bans deducing race, religion, political views, sexual orientation, or union membership from biometric data. Corporate "diversity analytics" tools attempting to infer workforce racial composition from video data are illegal. Law enforcement has limited exceptions under strict conditions, but these don't extend to private sector applications.

Real-time public biometric identification prohibits live facial recognition in publicly accessible spaces for law enforcement. Narrow exceptions exist for three purposes: searching for missing persons and specific victims of abduction or trafficking, preventing imminent threats to life or terrorist attacks, and locating suspects of the serious crimes listed in Annex II. Each exception requires prior judicial or independent administrative authorization. The default position is prohibition; exceptions require documented justification and oversight.

High-Risk AI: Comprehensive Obligations Required

High-risk classification triggers through two distinct pathways, each with different timelines and requirements. Misclassification creates operational risk — over-classification wastes resources (€16,000–€23,000 per system for unnecessary conformity assessments), while under-classification invites enforcement action with €15 million or 3% global turnover penalties.

Pathway 1: Safety components of regulated products (Annex I). Some AI systems are safety components of products covered by existing EU harmonization legislation (medical devices, vehicles, machinery, aviation, toys, lifts), or are themselves such products. These systems are high-risk when that legislation requires third-party conformity assessment. They face an extended compliance deadline of August 2, 2027, recognizing the complexity of integrating AI Act requirements with existing sectoral regulations like the Medical Device Regulation (MDR) or automotive safety standards.

Pathway 2: Annex III use cases specify eight domains where AI applications are presumptively high-risk due to fundamental rights impact. Compliance deadline: August 2, 2026.

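- Biometrics: Remote biometric identification systems, excluding pure identity verification and the real-time uses prohibited under Article 5. The category also covers biometric categorization based on inferred sensitive or protected attributes where Article 5 doesn't prohibit it, and emotion recognition outside prohibited areas. Post-event facial recognition for serious crime investigation requires full compliance.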

- Critical infrastructure: Safety components in the management and operation of critical digital infrastructure, road traffic, and the supply of water, gas, heating, and electricity. AI systems managing traffic signals, controlling power grid distribution, or monitoring water treatment facilities face high-risk requirements.

- Education and vocational training: Systems determining access to educational institutions, assigning students to programs, or evaluating learning outcomes. Automated admission decisions, AI-powered grading systems that influence final grades, exam proctoring with automated cheating detection, and skills assessment tools determining certification all require full compliance.

- Employment and workers management: Recruitment tools (CV filtering, candidate ranking), promotion decisions, performance monitoring, and task allocation systems. AI is already widespread in hiring, and some surveys put adoption at around half of large enterprises. That makes this one of the most impactful categories.

- Essential services: Public benefits eligibility, credit scoring (except fraud detection), insurance risk assessment for life and health insurance, and emergency call triage. Credit scoring is explicitly classified as high-risk under Annex III.5.b.

- Law enforcement: Assessing a person's risk of becoming a crime victim, polygraphs and similar tools, evaluation of evidence reliability, recidivism risk assessment, and profiling during investigations (for permitted purposes).

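- Migration, asylum, and border control: Polygraphs and similar tools, risk assessment (security, irregular migration, health), examination of asylum, visa, and residence permit applications, and detection or identification of persons. Travel document verification is expressly excluded. These applications involve vulnerable populations and create irreversible consequences.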

- Justice and democracy: AI assisting judicial authorities in legal research and interpretation, dispute resolution assistance, and systems potentially influencing election outcomes.

Important Exemption: Article 6(3) Derogation. A system listed in Annex III is NOT high-risk if it does not pose a significant risk of harm and meets at least one of four conditions:
- it performs a narrow procedural task (spam filtering in HR email);
- it improves the result of previously completed human work without replacing judgment (spell-checker for judges);
- it detects decision patterns without replacing or influencing the prior human assessment absent proper human review (analyzing aggregate grading trends); or
- it performs a purely preparatory task (file format conversion).

Critical limitation: systems performing profiling of natural persons can never benefit from this derogation.

Providers relying on the Article 6(3) derogation must document their assessment before placing the system on the market. They must also provide that documentation to authorities on request and register the system in the EU database. This creates a compliance obligation even for systems claiming exemption: you must prove why you qualify.
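Because the derogation assessment has to be documented, it helps to record which condition the provider relied on. The sketch below assumes a hypothetical `DerogationFacts` record whose boolean fields a reviewer fills in. It encodes the logic described above and is not an official tool:

```python
from dataclasses import dataclass


@dataclass
class DerogationFacts:
    """Hypothetical reviewer answers for one Annex III system."""
    narrow_procedural_task: bool = False
    improves_completed_human_work: bool = False
    detects_patterns_with_human_review: bool = False
    preparatory_task: bool = False
    profiles_natural_persons: bool = False
    poses_significant_risk_of_harm: bool = False


def assess_derogation(facts: DerogationFacts) -> tuple[bool, list[str]]:
    """Return (derogation_applies, reasoning) suitable for the assessment file."""
    reasons: list[str] = []
    # Profiling of natural persons always keeps an Annex III system high-risk.
    if facts.profiles_natural_persons:
        reasons.append("Profiles natural persons: derogation unavailable.")
        return False, reasons
    if facts.poses_significant_risk_of_harm:
        reasons.append("Poses a significant risk of harm: derogation unavailable.")
        return False, reasons
    conditions = {
        "narrow procedural task": facts.narrow_procedural_task,
        "improves previously completed human work": facts.improves_completed_human_work,
        "detects patterns without replacing human review": facts.detects_patterns_with_human_review,
        "preparatory task": facts.preparatory_task,
    }
    # Any one condition is enough; record every one that was met.
    met = [name for name, is_met in conditions.items() if is_met]
    if not met:
        reasons.append("No Article 6(3) condition met: system is high-risk.")
        return False, reasons
    reasons.append("Meets condition(s): " + ", ".join(met) + ".")
    return True, reasons


# Example: a file-format conversion step feeding an HR workflow.
applies, reasons = assess_derogation(DerogationFacts(preparatory_task=True))
print(applies, reasons)
```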

Limited Risk: Transparency Requirements

AI systems interacting directly with users or generating synthetic content face mandatory disclosure obligations effective August 2, 2026. These requirements don't impose the full technical documentation burden of high-risk systems, but create legal obligations you cannot ignore.

- Chatbots and conversational AI must inform users they are interacting with AI unless it's obvious from context. The standard applies to customer service bots, virtual assistants, and any AI system engaging in natural language conversation.

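- Deepfakes and synthetic media: providers of systems generating synthetic audio, images, video, or text must mark outputs in a machine-readable format so they are detectable as artificially generated. Deployers publishing deepfakes must also disclose that the content was artificially generated or manipulated. Machine-readable means marks that software can detect, such as metadata or embedded watermarks, not just a visible label.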

- Emotion recognition and biometric categorization systems: deployers must inform exposed individuals when the system operates. The exception is systems permitted by law for detecting, preventing, or investigating criminal offences. Systems deployed in permitted contexts (retail analytics, public safety monitoring) require clear notice to affected individuals.

- AI-generated text on public matters must disclose artificial generation unless under human editorial review. This targets automated content generation in news, political communication, and public discourse.
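One way to picture a machine-readable label is a provenance record bound to the exact bytes of the output. The sketch below uses a hypothetical JSON schema, and the field names are illustrative rather than standard. Production systems would more likely embed marks in the file itself or use an industry provenance standard such as C2PA:

```python
import hashlib
import json
from datetime import datetime, timezone


def make_label(content: bytes, generator: str, media_type: str) -> str:
    """Return a JSON label marking `content` as AI-generated (hypothetical schema)."""
    label = {
        "ai_generated": True,
        "generator": generator,
        "media_type": media_type,
        # The hash ties the label to these exact bytes, so a checker can tell
        # when the label is attached to different or edited content.
        "content_sha256": hashlib.sha256(content).hexdigest(),
        "created_utc": datetime.now(timezone.utc).isoformat(),
    }
    return json.dumps(label, sort_keys=True)


def label_matches(content: bytes, label_json: str) -> bool:
    """True if the label flags AI generation and refers to exactly this content."""
    label = json.loads(label_json)
    return (label.get("ai_generated") is True
            and label.get("content_sha256") == hashlib.sha256(content).hexdigest())


output = b"placeholder bytes standing in for a generated image"
label = make_label(output, generator="example-model-v1", media_type="image/png")
print(label_matches(output, label))           # True
print(label_matches(b"edited image", label))  # False
```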

Penalty for violations: €15 million or 3% of global annual turnover. Limited-risk obligations carry the same penalty tier as high-risk compliance failures, signaling transparency is not optional.

Minimal Risk: Voluntary Measures Only

AI systems not falling into higher categories face no mandatory obligations but may adopt voluntary codes of conduct. This includes spam filters, recommendation engines (not using sensitive profiling), inventory management systems, video games, and standard business process automation not involving human assessment.

Organizations should still document why systems qualify as minimal risk. The absence of mandatory obligations doesn't mean absence of due diligence. Classification disputes with regulators require evidence demonstrating your system doesn't fall into higher-risk categories.

Systematic Risk Classification: The Decision Process

Organizations should evaluate each AI system using this hierarchical assessment. Work through the decision tree systematically — earlier determinations control later analysis.

Step 1: Jurisdictional scope. Is your AI system placed on the market or put into service in the EU, or is its output used in the EU? US-based company using AI to assess credit applications for Dublin customers? You're in scope. Singapore-based recruitment platform processing applications for Paris positions? You're in scope. The extraterritorial reach mirrors GDPR: if your AI's output is used in the EU, the regulation applies regardless of where you're located.

If the answer is no — system used exclusively outside EU with no EU impact — the AI Act doesn't apply. Document this determination because you'll need to justify it if operations expand.

Step 2: Prohibited practices check. Does the system use prohibited techniques from Article 5? Review each category systematically:

- Subliminal manipulation beyond conscious awareness?

- Exploitation of vulnerabilities (age, disability, economic situation)?

- Social scoring of individuals, by public or private actors?

- Criminal risk prediction based solely on profiling or personality traits?

- Real-time biometric identification in public spaces?

- Biometric categorization inferring sensitive characteristics?

- Emotion recognition in workplace or education?

- Untargeted facial scraping for database building?

If yes to any category: PROHIBITED. Discontinue immediately. Mitigation measures cannot make a prohibited practice legal; outside the narrow exceptions written into Article 5 itself, the prohibition is absolute. Document decommissioning decisions and ensure no similar systems are in development.

Step 3: High-risk pathway assessment. If not prohibited, determine whether the system qualifies as high-risk through either pathway.

Pathway A: Annex I regulated product. Is the system a safety component of a product covered by Annex I harmonization legislation (machinery, medical devices, vehicles, aviation, toys, lifts)? Does it require third-party conformity assessment under that sectoral legislation? If yes: HIGH-RISK via Annex I. Compliance deadline August 2, 2027. Contact your Notified Body regarding dual accreditation status for both sectoral regulation and AI Act.

Pathway B: Annex III stand-alone system. Is the system used in one of the eight Annex III domains? If yes, proceed to Article 6(3) derogation analysis. The derogation applies only if all three of the following hold:
- the system poses no significant risk of harm;
- it performs ONLY a narrow procedural task, an improvement of completed human work without replacing judgment, pattern detection without influencing decisions, or a preparatory activity; and
- it avoids profiling natural persons.

- If derogation applies: Document exemption reasoning. Register in EU database indicating Article 6(3) derogation. Apply LIMITED RISK transparency requirements if system interacts with users or generates content.

- If derogation does NOT apply: HIGH-RISK via Annex III. Full compliance required. Deadline August 2, 2026.

Step 4: Limited risk transparency check. If not high-risk, does the system interact directly with users (chatbots, virtual assistants), generate synthetic content (deepfakes, AI-generated media), or perform emotion recognition in permitted contexts? If yes: LIMITED RISK. Transparency obligations apply. Compliance deadline August 2, 2026.

Step 5: Minimal risk determination. If none of the above apply: MINIMAL RISK. No mandatory obligations. Consider voluntary codes of conduct. Document classification reasoning for future reference.
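The five steps translate into an ordered chain of checks where an earlier answer short-circuits later ones. The sketch below assumes hypothetical `SystemFacts` fields that a reviewer answers for each system. The Article 6(3) field is expected to hold the outcome of a separately documented assessment:

```python
from dataclasses import dataclass
from enum import Enum


class RiskTier(Enum):
    OUT_OF_SCOPE = "out of scope"
    PROHIBITED = "prohibited"
    HIGH_RISK_ANNEX_I = "high-risk (Annex I), deadline 2027-08-02"
    HIGH_RISK_ANNEX_III = "high-risk (Annex III), deadline 2026-08-02"
    LIMITED = "limited risk, deadline 2026-08-02"
    MINIMAL = "minimal risk"


@dataclass
class SystemFacts:
    """Hypothetical reviewer answers for one AI system."""
    eu_market_or_eu_output: bool
    uses_article_5_practice: bool = False
    annex_i_safety_component: bool = False
    annex_i_third_party_assessment: bool = False
    annex_iii_area: bool = False
    article_6_3_derogation: bool = False  # outcome of a documented assessment
    transparency_trigger: bool = False    # chatbot, synthetic content, emotion recognition


def classify(f: SystemFacts) -> RiskTier:
    # Step 1: jurisdictional scope.
    if not f.eu_market_or_eu_output:
        return RiskTier.OUT_OF_SCOPE
    # Step 2: Article 5 prohibitions override everything else.
    if f.uses_article_5_practice:
        return RiskTier.PROHIBITED
    # Step 3, pathway A: Annex I safety component needing third-party assessment.
    if f.annex_i_safety_component and f.annex_i_third_party_assessment:
        return RiskTier.HIGH_RISK_ANNEX_I
    # Step 3, pathway B: Annex III use case unless the derogation applies.
    if f.annex_iii_area and not f.article_6_3_derogation:
        return RiskTier.HIGH_RISK_ANNEX_III
    # Step 4: transparency obligations.
    if f.transparency_trigger:
        return RiskTier.LIMITED
    # Step 5: everything else.
    return RiskTier.MINIMAL


hiring_tool = SystemFacts(eu_market_or_eu_output=True, annex_iii_area=True)
print(classify(hiring_tool).value)  # high-risk (Annex III), deadline 2026-08-02
```

The function returns only the most demanding tier. Transparency obligations can apply on top of high-risk requirements, so a high-risk chatbot still needs the Step 4 disclosures.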

Mandatory Documentation for High-Risk Systems

Article 11 and Annex IV establish exhaustive technical documentation requirements that must be prepared before market placement and maintained throughout the system's lifecycle. This isn't documentation you can assemble retroactively — the requirements cover development decisions, data governance, testing results, and design rationale that must be captured during development.

The documentation serves multiple purposes: demonstrating conformity during assessment, enabling market surveillance authority review, supporting post-market monitoring, and providing deployers with information needed for proper system use. Incomplete documentation renders conformity assessment impossible and creates immediate non-compliance.

Section 1: General System Description

System identity and versioning. Document intended purpose, provider identity and contact information, system version history with change logs, and complete specifications. This creates the foundation for traceability. When incidents occur or updates deploy, authorities need clear version identification.

Technical architecture and dependencies. Describe all hardware and software dependencies, integration requirements and APIs, computational resources required for operation, and system architecture diagrams showing component integration. Document whether the system operates standalone, requires cloud connectivity, processes data locally or remotely, and depends on specific hardware (GPUs, specialized processors).

Market placement forms. Specify all forms of market access: downloadable software, API access, embedded in hardware products, or software-as-a-service. Each distribution method may create different compliance obligations, particularly regarding instructions for use and human oversight measures.

User interface and deployer instructions. Provide a complete description of the user interface, including screenshots and interaction flows. Document deployer instructions covering installation, configuration, operation, monitoring, and troubleshooting. Include clear guidance on human oversight requirements specific to the deployment context.

Physical components. For systems with hardware components, include photographs, internal layout diagrams, and physical specifications. Medical devices, robotics, and embedded systems require comprehensive hardware documentation.

Section 2: Development Process Documentation

Development methodology and lifecycle. Document the development methodology used (waterfall, agile, DevOps integration), key development steps and decision points, design specifications including system logic and algorithmic approach, and rationale for major design choices. Regulators want to understand why you made specific design decisions, not just what the final system does.

Pre-trained models and third-party tools. Identify any pre-trained models used as foundation (GPT, BERT, ResNet), third-party tools or libraries integrated, training frameworks (TensorFlow, PyTorch), and external services or APIs consumed. Document version numbers and update policies for third-party components — supply chain traceability matters.

Algorithmic design specifications. Provide a detailed description of the algorithms implemented. Cover classification approaches and model architectures, optimization objectives and loss functions, parameter relevance and sensitivity analysis, and computational complexity analysis. High-level descriptions are not enough: authorities need sufficient technical detail to evaluate the system.

System architecture integration. Create diagrams showing how components integrate, data flows between components, external system interfaces, and security boundaries. Document which components involve AI processing versus traditional logic, and where human oversight points integrate into the architecture.

Computational resources. Document computational resources used for development, training, and testing. This includes hardware specifications (CPU/GPU configurations), cloud computing resources consumed, training time and costs, and environmental impact considerations.

Section 3: Data Governance Documentation (Model Card Requirements)

Data governance represents one of the most detailed documentation requirements. Article 10 imposes strict data quality obligations, and Annex IV requires comprehensive documentation demonstrating compliance.

Training data provenance. Document origin and sources of all training data, scope and scale (number of samples, data types), collection methods and timeframes, data acquisition legal basis (particularly for personal data), and rights to use data for training purposes. The untargeted facial scraping prohibition makes provenance documentation critical — you must prove your training data doesn't violate Article 5.

Data selection criteria and rationale. Explain selection criteria for included versus excluded data, filtering rules applied during curation, sampling strategies for large datasets, and rationale for coverage decisions. If your training data excludes certain populations or scenarios, document why and assess implications for performance across use contexts.

Labeling procedures and annotation protocols. Provide the detailed annotation guidelines given to labelers, labeler training and qualification processes, inter-annotator agreement metrics, quality control procedures, and handling of ambiguous or uncertain cases. Label quality directly impacts model behavior, so sloppy annotation creates compliance risk.
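Inter-annotator agreement is usually reported as a chance-corrected statistic. Below is a minimal sketch of Cohen's kappa for two annotators. The AI Act does not prescribe this metric, so treat it as one reasonable choice:

```python
from collections import Counter


def cohens_kappa(labels_a: list[str], labels_b: list[str]) -> float:
    """Cohen's kappa for two annotators labelling the same items."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("Need two equal-length, non-empty label lists")
    n = len(labels_a)
    # Observed agreement: share of items both annotators labelled identically.
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    counts_a, counts_b = Counter(labels_a), Counter(labels_b)
    # Chance agreement: probability of matching if each labelled independently.
    expected = sum(counts_a[label] * counts_b[label] for label in counts_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both used one identical label throughout; kappa is undefined
    return (observed - expected) / (1 - expected)


annotator_1 = ["yes", "yes", "no", "no", "yes", "no"]
annotator_2 = ["yes", "no", "no", "no", "yes", "yes"]
print(round(cohens_kappa(annotator_1, annotator_2), 3))  # 0.333
```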

Data cleaning methodologies. Document outlier detection and handling approaches, missing data treatment, error correction procedures, duplicate removal strategies, and normalization or preprocessing steps. Explain why specific cleaning choices were made and how they impact representativeness.

Bias examination results and mitigation measures. This is critical. Document the following:
- statistical analysis of training data demographics;
- identification of underrepresented or overrepresented groups;
- specific biases discovered;
- mitigation measures implemented (resampling, reweighting, synthetic data); and
- post-mitigation validation results.

Article 10(5) permits processing special category personal data (race, ethnicity) where strictly necessary for bias detection and correction. That processing is subject to safeguards such as pseudonymization, access controls, and deletion once the bias is corrected. Use this legal basis for thorough bias analysis, and document why the processing was necessary.

Data gap identification and remediation plans. Identify known gaps in training data coverage, populations or scenarios underrepresented, limitations in geographic or demographic diversity, and remediation plans for addressing gaps. Document trade-offs between perfect representativeness (often impossible) and practical data availability.

Statistical properties validation. Demonstrate training data statistical properties match target deployment populations. Document distribution analyses comparing training data to expected real-world inputs, validation that key features exhibit appropriate ranges and relationships, and testing of data quality metrics (completeness, accuracy, consistency).
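A distribution comparison can be reduced to a single drift score per feature. The sketch below uses the population stability index (PSI), a technique borrowed from credit-risk modelling rather than anything the AI Act names. The age bands and shares are hypothetical:

```python
import math


def population_stability_index(expected: dict[str, float],
                               actual: dict[str, float],
                               eps: float = 1e-6) -> float:
    """PSI between category shares in training data (expected) and deployment (actual)."""
    psi = 0.0
    for category in set(expected) | set(actual):
        p = max(expected.get(category, 0.0), eps)  # floor avoids log(0)
        q = max(actual.get(category, 0.0), eps)
        psi += (q - p) * math.log(q / p)  # each term is non-negative
    return psi


# Hypothetical age-band shares: training data vs. expected applicant population.
training = {"18-34": 0.5, "35-54": 0.3, "55+": 0.2}
deployment = {"18-34": 0.3, "35-54": 0.3, "55+": 0.4}
print(round(population_stability_index(training, deployment), 3))  # 0.241
```

A common industry rule of thumb treats a PSI above 0.25 as a major shift. That cut-off is a convention, not a regulatory requirement.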

GDPR Integration Note: The data governance documentation must satisfy both AI Act requirements and GDPR obligations when personal data is involved. Create unified documentation addressing both frameworks rather than separate artifacts that might contradict each other.

Section 4: Validation and Testing

Testing procedures and protocols. Document comprehensive testing methodology including test planning, test case development, testing phases (unit, integration, system, acceptance), and pass/fail criteria. Specify testing environments (simulated versus real-world data), testing timelines, and responsible parties.

Performance metrics and accuracy measurements. Provide detailed accuracy measurements across relevant metrics (precision, recall, F1, AUC-ROC), performance broken down by demographic subgroups, comparison against baseline or alternative approaches, and statistical confidence intervals. Article 15 requires "appropriate level of accuracy" — you must define what "appropriate" means for your use case and demonstrate achievement.
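Subgroup breakdowns are straightforward once each prediction is tagged with a group label. Below is a minimal sketch for a binary classifier, assuming 0/1 labels and a hypothetical `subgroup_metrics` helper. Confidence intervals (for example via bootstrapping) would be layered on top:

```python
from collections import defaultdict


def subgroup_metrics(y_true: list[int], y_pred: list[int],
                     groups: list[str]) -> dict[str, dict[str, float]]:
    """Precision, recall and F1 per subgroup for binary (0/1) labels."""
    counts = defaultdict(lambda: {"tp": 0, "fp": 0, "fn": 0})
    for truth, pred, group in zip(y_true, y_pred, groups):
        c = counts[group]  # touching the key registers every group, even all-TN ones
        if pred == 1 and truth == 1:
            c["tp"] += 1
        elif pred == 1 and truth == 0:
            c["fp"] += 1
        elif pred == 0 and truth == 1:
            c["fn"] += 1
    results = {}
    for group, c in counts.items():
        precision = c["tp"] / (c["tp"] + c["fp"]) if c["tp"] + c["fp"] else 0.0
        recall = c["tp"] / (c["tp"] + c["fn"]) if c["tp"] + c["fn"] else 0.0
        f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
        results[group] = {"precision": precision, "recall": recall, "f1": f1}
    return results


report = subgroup_metrics(
    y_true=[1, 0, 1, 1, 0, 1],
    y_pred=[1, 0, 0, 1, 1, 1],
    groups=["A", "A", "A", "B", "B", "B"],
)
print(report)
```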

Robustness testing results. Document testing under adverse conditions: noisy or corrupted inputs, out-of-distribution data, edge cases and boundary conditions, and stress testing under high load. Show the system handles errors, faults, and inconsistencies without catastrophic failure.

Potentially discriminatory impact assessments. Assess fairness metrics across protected characteristics (where lawful to collect), disparate impact analysis comparing outcomes across groups, investigation of discriminatory patterns, and validation that differences in performance don't create unjustified disparate treatment.
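Disparate impact analysis often starts with the ratio of favourable-outcome rates between groups. The sketch below assumes binary outcomes (1 = favourable) and a chosen reference group. The 0.8 flag threshold comes from the US "four-fifths" employment guideline and is only an illustrative assumption, not an AI Act threshold:

```python
def selection_rates(outcomes: list[int], groups: list[str]) -> dict[str, float]:
    """Share of favourable outcomes (1) per group."""
    totals: dict[str, int] = {}
    favourable: dict[str, int] = {}
    for outcome, group in zip(outcomes, groups):
        totals[group] = totals.get(group, 0) + 1
        favourable[group] = favourable.get(group, 0) + outcome
    return {g: favourable[g] / totals[g] for g in totals}


def impact_ratios(outcomes: list[int], groups: list[str], reference: str) -> dict[str, float]:
    """Each group's selection rate divided by the reference group's rate."""
    rates = selection_rates(outcomes, groups)
    if rates[reference] == 0:
        raise ValueError("Reference group has no favourable outcomes")
    return {g: r / rates[reference] for g, r in rates.items()}


def flag_groups(ratios: dict[str, float], threshold: float = 0.8) -> list[str]:
    """Groups whose ratio falls below the assumed four-fifths threshold."""
    return sorted(g for g, r in ratios.items() if r < threshold)


outcomes = [1, 1, 1, 0, 1, 0, 0, 0]
groups = ["A", "A", "A", "A", "B", "B", "B", "B"]
ratios = impact_ratios(outcomes, groups, reference="A")
print(ratios, flag_groups(ratios))
```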

Test logs and documentation. Maintain all test logs with dates, versions tested, tester identification, and results. These logs must be signed and dated — authentication matters for conformity assessment. Authorities may request historical test records to verify continuous monitoring during development.
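Tamper evidence for test logs can be approximated by chaining each entry to the previous one and tagging it with a keyed MAC. This sketch uses Python's standard `hmac` and `hashlib` modules. An HMAC proves integrity under a shared secret; it is NOT a personal signature by the responsible person, which would typically require per-person asymmetric keys. The entry fields are hypothetical:

```python
import hashlib
import hmac
import json

GENESIS = "0" * 64  # stand-in "previous hash" for the first entry


def _payload(body: dict) -> bytes:
    # Canonical JSON so identical content always produces identical bytes.
    return json.dumps(body, sort_keys=True).encode("utf-8")


def append_entry(log: list[dict], entry: dict, key: bytes) -> None:
    """Append a test-log entry chained to its predecessor and tagged with an HMAC."""
    prev_hash = log[-1]["entry_hash"] if log else GENESIS
    body = {**entry, "prev_hash": prev_hash}
    payload = _payload(body)
    log.append({
        **body,
        "entry_hash": hashlib.sha256(payload).hexdigest(),
        "hmac": hmac.new(key, payload, hashlib.sha256).hexdigest(),
    })


def verify_log(log: list[dict], key: bytes) -> bool:
    """False if any entry was edited, reordered, or removed from the middle."""
    prev_hash = GENESIS
    for record in log:
        body = {k: v for k, v in record.items() if k not in ("entry_hash", "hmac")}
        if body.get("prev_hash") != prev_hash:
            return False
        payload = _payload(body)
        if hashlib.sha256(payload).hexdigest() != record["entry_hash"]:
            return False
        expected_mac = hmac.new(key, payload, hashlib.sha256).hexdigest()
        if not hmac.compare_digest(expected_mac, record["hmac"]):
            return False
        prev_hash = record["entry_hash"]
    return True


key = b"example-key-keep-in-a-secrets-manager"
test_log: list[dict] = []
append_entry(test_log, {"date": "2025-03-01", "version": "1.4.0",
                        "tester": "j.doe", "result": "pass"}, key)
append_entry(test_log, {"date": "2025-03-08", "version": "1.4.1",
                        "tester": "a.smith", "result": "pass"}, key)
print(verify_log(test_log, key))  # True
test_log[0]["result"] = "fail"    # simulate after-the-fact editing
print(verify_log(test_log, key))  # False
```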

Cybersecurity measures documentation. Document security testing procedures, penetration testing results, adversarial robustness testing (adversarial examples, data poisoning resistance), and implemented security controls. Article 15 explicitly requires resilience against attacks attempting to alter system use or performance.

Section 5: Performance Capabilities and Limitations

System capabilities and performance boundaries. Clearly define what the system can and cannot do. Document intended use cases, performance characteristics under normal operation, expected accuracy ranges, processing latency and throughput, and limitations on input types or formats.

Known accuracy limitations for specific groups. Identify any demographic groups or scenarios where accuracy is lower than baseline, quantify performance differences, explain causes of limitations, and provide guidance on mitigation or alternative approaches. This documentation supports deployers in understanding where human oversight becomes particularly critical.

Foreseeable unintended outcomes. Document potential misuse scenarios, predictable failure modes, consequences of incorrect outputs, and risks from over-reliance or automation bias. The risk management system (Article 9) considers "reasonably foreseeable misuse" — this section documents those scenarios.

Risk sources for health, safety, fundamental rights. Identify specific risks the system poses to health (physical or mental), safety (personal or public), fundamental rights (privacy, non-discrimination, due process), and vulnerable populations. Connect these risks to the Article 9 risk management system demonstrating how identified risks are mitigated.

Section 6: Compliance and Standards

Risk management system description. Provide complete documentation of the Article 9 risk management system: methodology used, risk identification and analysis procedures, risk evaluation criteria, mitigation hierarchy implementation, residual risk assessment, and continuous monitoring processes. Reference ISO 31000 or ISO/IEC 23894 if used as methodology foundation.

Applied harmonized standards. List all harmonized standards applied with specific references to Official Journal publications. Document which standards apply to which system components or requirements. Note: as of early 2025, AI Act-specific harmonized standards are still under development.

Solutions where standards not fully applied. When harmonized standards don't exist or aren't fully applied, document alternative approaches demonstrating equivalent protection. Explain why standard wasn't applied and justify chosen solution.

EU Declaration of Conformity copy. Include a copy of the signed EU Declaration of Conformity, the legal statement asserting system compliance with all applicable requirements. This declaration authorizes CE marking and enables market placement.

Post-market monitoring system description. Document Article 72 post-market monitoring system: metrics collected from deployed systems, data collection mechanisms, analysis procedures for identifying performance degradation or emerging risks, feedback loops from deployers, and incident reporting procedures.
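Degradation detection can begin as a rolling comparison against the accuracy documented at conformity assessment. The sketch below assumes that ground-truth feedback eventually arrives for deployed predictions. The class name, window size, and tolerance are hypothetical choices:

```python
from collections import deque


class AccuracyMonitor:
    """Rolling-window accuracy check against a documented baseline (hypothetical)."""

    def __init__(self, baseline: float, tolerance: float, window: int = 500):
        self.baseline = baseline
        self.tolerance = tolerance
        self.outcomes: deque[int] = deque(maxlen=window)  # oldest results drop off

    def record(self, prediction_correct: bool) -> None:
        self.outcomes.append(1 if prediction_correct else 0)

    def rolling_accuracy(self) -> float:
        return sum(self.outcomes) / len(self.outcomes) if self.outcomes else float("nan")

    def degraded(self) -> bool:
        # Alert only once the window is full, to avoid noise from tiny samples.
        if len(self.outcomes) < self.outcomes.maxlen:
            return False
        return self.rolling_accuracy() < self.baseline - self.tolerance


monitor = AccuracyMonitor(baseline=0.92, tolerance=0.03, window=100)
for i in range(100):
    monitor.record(i < 85)  # simulate 85% accuracy in the latest window
print(monitor.rolling_accuracy(), monitor.degraded())  # 0.85 True
```

A fired alert would feed the incident and feedback procedures described above rather than act on its own.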

Retention Requirement: 10 Years. All technical documentation must be maintained for 10 years after the system is placed on the market or put into service. This extends beyond typical software lifecycle management — plan for long-term documentation retention including archived versions.
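Computing the retention end date is simple until a placement date falls on 29 February. The sketch below assumes the clock starts on the placement or putting-into-service date. It also assumes a missing leap day rolls back to 28 February, an interpretive choice worth confirming with counsel:

```python
from datetime import date


def retention_end(start: date, years: int = 10) -> date:
    """Date until which documentation must be kept, counted from market placement."""
    try:
        return start.replace(year=start.year + years)
    except ValueError:
        # start is 29 February and the target year has no leap day (assumed roll-back).
        return start.replace(year=start.year + years, day=28)


print(retention_end(date(2026, 8, 2)))   # 2036-08-02
print(retention_end(date(2028, 2, 29)))  # 2038-02-28
```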

Conformity Assessment Pathways

Before placing a high-risk AI system on the market, you must complete conformity assessment verifying compliance with Chapter III requirements. The pathway you follow depends on system type, whether you've applied harmonized standards, and specific use case.

Internal Control (Annex VI)

Internal control allows provider self-assessment without third-party auditor involvement. This significantly reduces costs and timelines but requires meeting specific conditions.

Process steps. The provider compiles technical documentation per Annex IV, implements a Quality Management System per Article 17, conducts internal compliance verification, signs the EU Declaration of Conformity, affixes the CE marking, and registers the system in the EU database.

Applicability conditions. Under Article 43(2), internal control is the required procedure for Annex III high-risk systems in points 2 through 8. These are critical infrastructure, education, employment, essential services, law enforcement, migration, and justice and democracy. No Notified Body is involved, whether or not harmonized standards have been published. For Annex III point 1 biometric systems, internal control is available only if you've fully applied harmonized standards or common specifications covering all relevant requirements.

Critical limitation. For biometric systems, third-party assessment becomes mandatory in three cases: harmonized standards or common specifications don't exist, they aren't complete, or you haven't fully applied them. In any of these cases you cannot use internal control. Providers of biometric systems launching before standards are published face this challenge.

Cost estimate. Internal control costs primarily reflect internal resource allocation: technical documentation preparation (€10,000–€30,000 depending on complexity), QMS implementation (€193,000–€330,000 initial setup if not existing), and internal testing and validation (€5,000–€15,000). Total: approximately €210,000–€375,000 for organizations without existing QMS, or €15,000–€45,000 for subsequent systems once QMS is established.
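The totals above are sums of the component ranges, with the QMS line dropped once a QMS exists. The small sketch below reproduces that arithmetic using only the figures quoted in this section:

```python
# EUR ranges (low, high) quoted in the cost estimate above.
COMPONENTS = {
    "technical_documentation": (10_000, 30_000),
    "qms_initial_setup": (193_000, 330_000),
    "internal_testing_and_validation": (5_000, 15_000),
}


def internal_control_cost(qms_already_in_place: bool) -> tuple[int, int]:
    """Sum the component ranges, skipping QMS setup when a QMS already exists."""
    low = high = 0
    for name, (low_eur, high_eur) in COMPONENTS.items():
        if qms_already_in_place and name == "qms_initial_setup":
            continue  # an established QMS is reused for later systems
        low += low_eur
        high += high_eur
    return low, high


print(internal_control_cost(qms_already_in_place=False))  # (208000, 375000)
print(internal_control_cost(qms_already_in_place=True))   # (15000, 45000)
```

The prose rounds the lower first-system figure of €208,000 up to €210,000.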

Third-Party Assessment (Annex VII)

Third-party assessment involves independent Notified Body review of your Quality Management System and technical documentation. This pathway is mandatory for specific system types and when internal control doesn't apply.

Applicable scenarios. Third-party assessment applies to Annex III point 1 biometric systems (remote biometric identification, biometric categorization, emotion recognition):

- Mandatory where harmonized standards or common specifications don't exist, are incomplete, or haven't been fully applied

- Optional, at the provider's choice, where harmonized standards have been fully applied but the provider prefers external validation

Process steps. The process runs in order:
1. The provider submits an application to the chosen Notified Body.
2. The provider gives access to the technical documentation and QMS.
3. The Notified Body conducts a documentation review and, if needed, an on-site assessment.
4. The Notified Body either issues an EU Technical Documentation Assessment Certificate (valid for the period stated, up to four years for Annex III systems) or identifies non-conformities requiring correction.
5. The provider corrects any deficiencies and resubmits.
6. Once the certificate is issued, the provider signs the Declaration of Conformity and affixes the CE marking.

Notified Body selection. Member States designate Notified Bodies with specific AI Act accreditation. As of early 2025, the number of designated Notified Bodies remains limited, creating potential bottlenecks. Providers should identify and contact potential Notified Bodies early — waiting until months before the August 2026 deadline risks inability to secure assessment slots.

Cost estimate. Third-party assessment costs range from €16,000 to €23,000 per system for standard assessments, potentially reaching €50,000+ for highly complex systems requiring extensive review. Allow 3–6 months for initial assessment, longer if corrections are needed.

Certificate validity and renewal. Under Article 44(2), certificates are valid for the period they indicate, up to 4 years for Annex III systems and 5 years for Annex I systems. Extension requires re-assessment demonstrating continued compliance, accounting for any system modifications during the validity period. Significant system changes may trigger re-assessment before certificate expiration.

Sector-Specific Conformity Assessment

High-risk AI systems qualifying via Annex I pathway (medical devices, vehicles, machinery, aviation, toys, lifts) undergo conformity assessment through existing sectoral procedures rather than AI Act-specific pathways.

Integration approach. The AI Act requirements integrate into existing conformity assessment under sectoral legislation. Medical device Notified Bodies assess both Medical Device Regulation (MDR) compliance AND AI Act compliance in unified assessment. Technical documentation can be combined — Annex IV requirements integrate into MDR technical files.

Dual accreditation requirement. Notified Bodies must hold accreditation for both the sectoral legislation (MDR, automotive regulations, etc.) and AI Act. Contact your existing Notified Body to confirm they're pursuing AI Act accreditation — you may need to identify alternative or additional Notified Bodies if your current partner lacks dual accreditation.

Extended timeline. Annex I systems face August 2, 2027 deadline — one year later than Annex III systems. This recognizes the complexity of integrating AI Act requirements with existing sectoral compliance programs.

Risk Management System Requirements

Article 9 mandates continuous, lifecycle-spanning risk management for all high-risk AI systems. This isn't a one-time assessment at launch — you must maintain active risk management throughout operation, identifying emerging risks and implementing mitigation measures as understanding evolves.

Eight-Step Risk Management Methodology

Step 1: Establish the risk management system. Document a risk management system covering the entire AI lifecycle from initial conception through development, deployment, operation, monitoring, updating, and eventual decommissioning. Align with ISO 31000 (general risk management) or ISO/IEC 23894 (AI-specific risk management) as methodology foundation. Define roles and responsibilities for risk management. Identify who performs risk assessments, who approves risk acceptance decisions, who monitors deployed systems for emerging risks, and who authorizes risk mitigation measures.

Step 2: Identify and analyze risks. Catalog all known and reasonably foreseeable risks to health, safety, and fundamental rights. Consider both intended use scenarios and reasonably foreseeable misuse. Don't limit risk identification to technical failures: include risks from correct operation producing discriminatory outcomes, privacy violations from inference on sensitive characteristics, or safety impacts from over-reliance on system outputs. Use structured techniques: hazard and operability studies (HAZOP), failure mode and effects analysis (FMEA), threat modeling, and scenario planning.

Step 3: Estimate and evaluate risks. Quantify probability and severity for each identified risk. Use consistent risk matrices defining severity levels (negligible, minor, moderate, major, catastrophic) and probability levels (rare, unlikely, possible, likely, almost certain). Evaluate risks under both normal operation and misuse conditions.
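A common way to apply such a matrix is to rank each scale from 1 to 5 and multiply. The sketch below assumes that multiplicative convention (the Act does not prescribe it) and uses hypothetical band cut-offs; it evaluates each risk under normal operation and foreseeable misuse and keeps the worse result.

```python
SEVERITY = ["negligible", "minor", "moderate", "major", "catastrophic"]
PROBABILITY = ["rare", "unlikely", "possible", "likely", "almost certain"]


def risk_score(severity: str, probability: str) -> int:
    """Score on a 1-25 scale: severity rank (1-5) times probability rank (1-5)."""
    return (SEVERITY.index(severity) + 1) * (PROBABILITY.index(probability) + 1)


def risk_band(score: int) -> str:
    # Hypothetical cut-offs; define your own in the risk management plan.
    if score >= 15:
        return "high"
    if score >= 6:
        return "medium"
    return "low"


def evaluate(normal: tuple, misuse: tuple) -> str:
    """Evaluate a risk under normal operation and foreseeable misuse,
    each given as (severity, probability); the worse case sets the band."""
    return risk_band(max(risk_score(*normal), risk_score(*misuse)))


print(risk_score("major", "possible"))                       # 12
print(evaluate(("major", "unlikely"), ("major", "likely")))  # high
```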

Step 4: Integrate post-market monitoring data. Article 72 post-market monitoring generates real-world performance data revealing risks not apparent during pre-market testing. Create feedback loop from post-market monitoring into risk management system. Update risk profiles based on actual field performance, reported incidents, user feedback, and observed misuse patterns. This step distinguishes AI Act risk management from traditional product safety approaches.
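One way to close the feedback loop is to translate observed field incident rates back into the same probability scale used at design time. In this sketch the per-10,000-use thresholds are hypothetical, and the update is deliberately conservative: field data can raise a probability estimate but never lower it.

```python
PROBABILITY = ["rare", "unlikely", "possible", "likely", "almost certain"]
# Hypothetical: minimum incidents per 10,000 uses for each probability level.
LEVEL_FLOORS = [0.0, 0.1, 1.0, 10.0, 100.0]


def field_probability(incidents: int, uses: int) -> str:
    """Map an observed incident rate from post-market monitoring to a level."""
    if uses <= 0:
        raise ValueError("uses must be positive")
    rate = incidents / uses * 10_000
    level = max(i for i, floor in enumerate(LEVEL_FLOORS) if rate >= floor)
    return PROBABILITY[level]


def updated_probability(pre_market: str, incidents: int, uses: int) -> str:
    """Conservative update: field data can raise, but not lower, the estimate."""
    observed = field_probability(incidents, uses)
    return max(pre_market, observed, key=PROBABILITY.index)


print(updated_probability("unlikely", incidents=30, uses=10_000))  # likely
print(updated_probability("possible", incidents=0, uses=10_000))   # possible
```

Lowering an estimate on the strength of a quiet deployment period is a separate decision that should go through the documented risk acceptance process rather than happen automatically.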

Step 5: Adopt risk management measures using priority hierarchy. The AI Act establishes clear mitigation hierarchy:

- First priority: Eliminate risks through design. Modify system architecture, algorithm choice, or technical approach to eliminate risk at source.

- Second priority: Mitigate unavoidable risks through protective measures. When elimination isn't feasible, implement controls reducing risk probability or severity — confidence thresholds triggering human review, ensemble methods, or technical controls preventing out-of-scope operation.

- Third priority: Inform deployers of residual risks. Article 13 instructions for use must clearly communicate residual risks remaining after mitigation.

- Fourth priority: Train deployers on proper system use. Provide training addressing identified risks, proper operation within system limitations, recognition of inappropriate use cases, and response to system failures.
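The second-priority control of routing uncertain outputs to a human can be sketched as follows. The 0.90 threshold and the `in_scope` flag are hypothetical: the threshold should come from validation data, and the scope check stands in for whatever test confirms that an input falls within the system's intended operating conditions.

```python
from dataclasses import dataclass


@dataclass
class RoutedOutput:
    label: str
    confidence: float
    route: str  # "automatic" or "human_review"


def route_output(label: str, confidence: float, threshold: float = 0.90,
                 in_scope: bool = True) -> RoutedOutput:
    """Send low-confidence or out-of-scope outputs to a human reviewer.

    threshold is a hypothetical value; derive it from validation data.
    in_scope is the result of a separate check that the input falls inside
    the system's intended operating conditions.
    """
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    if not in_scope or confidence < threshold:
        return RoutedOutput(label, confidence, "human_review")
    return RoutedOutput(label, confidence, "automatic")


print(route_output("approve", 0.97).route)                  # automatic
print(route_output("approve", 0.62).route)                  # human_review
print(route_output("approve", 0.99, in_scope=False).route)  # human_review
```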

Step 6: Assess residual risk acceptability. After applying mitigation measures, assess whether residual risks are acceptable. Consider both individual hazard residual risks AND overall system residual risk. Document risk acceptance decisions — who made the determination, against what criteria, and what assumptions underlie acceptance.
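A residual risk acceptance decision can be recorded as structured data so that the decision-maker, criteria, and assumptions travel with the result. This is a sketch with hypothetical limits and role names; the plain sum used for overall risk is only a placeholder for whatever aggregation method your risk management plan documents.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import List


@dataclass
class ResidualRisk:
    hazard: str
    score: int  # residual score after mitigation, e.g. on a 1-25 matrix


@dataclass
class AcceptanceDecision:
    risks: List[ResidualRisk]
    per_hazard_limit: int  # hypothetical acceptance criterion per hazard
    overall_limit: int     # hypothetical criterion for the system as a whole
    decided_by: str
    decided_on: date
    assumptions: List[str] = field(default_factory=list)

    def unacceptable_hazards(self) -> List[str]:
        return [r.hazard for r in self.risks if r.score > self.per_hazard_limit]

    def overall_score(self) -> int:
        # A plain sum stands in for whatever aggregation method you document.
        return sum(r.score for r in self.risks)

    def accepted(self) -> bool:
        return (not self.unacceptable_hazards()
                and self.overall_score() <= self.overall_limit)


decision = AcceptanceDecision(
    risks=[ResidualRisk("misclassification of rare cases", 4),
           ResidualRisk("over-reliance by operators", 6)],
    per_hazard_limit=8,
    overall_limit=12,
    decided_by="Head of AI Risk (hypothetical role)",
    decided_on=date(2026, 3, 1),
    assumptions=["operators complete oversight training"],
)
print(decision.accepted())  # True
```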

Step 7: Conduct testing against predefined metrics. Test risk mitigation effectiveness against predefined metrics and probabilistic thresholds. Testing occurs throughout development (unit, integration, system) and before market placement (acceptance testing). Article 60 permits real-world testing under specific conditions — pilot deployments in controlled environments provide valuable risk validation.
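One way to make a threshold "probabilistic" is to require the lower confidence bound of a metric, not its point estimate, to clear the target. The sketch below uses the Wilson score interval for a proportion such as accuracy; the 0.92 target is a hypothetical acceptance criterion.

```python
import math


def wilson_lower_bound(successes: int, trials: int, z: float = 1.96) -> float:
    """Lower end of the Wilson score interval for a proportion (z=1.96 ~ 95%)."""
    if trials <= 0:
        raise ValueError("trials must be positive")
    p = successes / trials
    denom = 1 + z * z / trials
    centre = p + z * z / (2 * trials)
    margin = z * math.sqrt(p * (1 - p) / trials + z * z / (4 * trials * trials))
    return (centre - margin) / denom


def meets_threshold(successes: int, trials: int, threshold: float) -> bool:
    """Pass only if the lower confidence bound, not the point estimate, clears the bar."""
    return wilson_lower_bound(successes, trials) >= threshold


# Hypothetical acceptance criterion: accuracy of at least 0.92.
print(meets_threshold(930, 1_000, 0.92))     # False (observed 0.93, lower bound about 0.912)
print(meets_threshold(9_700, 10_000, 0.92))  # True
```

The first case shows why this matters: a test set of 1,000 cases with 93% observed accuracy does not yet demonstrate 92% with reasonable confidence, while a larger test set can.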

Step 8: Specifically assess impacts on vulnerable groups. Article 9 explicitly requires assessing adverse impacts on persons under 18 and other vulnerable populations: children and adolescents, elderly persons, persons with disabilities (physical, cognitive, sensory), persons with limited technical literacy, persons from marginalized communities, persons in positions of dependency (incarcerated, institutionalized). Vulnerable groups often experience disproportionate impacts from AI system risks.
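Assessing impacts on vulnerable groups starts with disaggregated evaluation. This sketch computes error rates per group and flags groups whose rate exceeds the best-served group's by more than a hypothetical 5-point gap. Groups too small to measure reliably are reported separately rather than silently dropped, since vulnerable groups are often under-represented in test data.

```python
from collections import defaultdict


def error_rates_by_group(records):
    """records: iterable of (group, y_true, y_pred). Returns {group: (error_rate, n)}."""
    errors, counts = defaultdict(int), defaultdict(int)
    for group, y_true, y_pred in records:
        counts[group] += 1
        errors[group] += int(y_true != y_pred)
    return {g: (errors[g] / counts[g], counts[g]) for g in counts}


def flag_disparities(rates, max_gap=0.05, min_n=30):
    """Return (flagged, too_small).

    flagged: groups whose error rate exceeds the best-served group's by more
    than max_gap. too_small: groups below min_n, reported rather than dropped.
    """
    too_small = sorted(g for g, (_, n) in rates.items() if n < min_n)
    eligible = {g: r for g, (r, n) in rates.items() if n >= min_n}
    if not eligible:
        return [], too_small
    best = min(eligible.values())
    flagged = sorted(g for g, r in eligible.items() if r - best > max_gap)
    return flagged, too_small


# Hypothetical evaluation data: (group, true label, predicted label).
records = ([("adult", 1, 1)] * 95 + [("adult", 1, 0)] * 5
           + [("over_75", 1, 1)] * 32 + [("over_75", 1, 0)] * 8
           + [("under_18", 1, 1)] * 5 + [("under_18", 1, 0)] * 5)
print(flag_disparities(error_rates_by_group(records)))
# (['over_75'], ['under_18'])
```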

Fundamental Rights Impact Assessment (FRIA)

While providers handle technical compliance, deployers face their own obligations. Article 27 mandates Fundamental Rights Impact Assessment (FRIA) for deployers who are public authorities, private entities providing public services, or deployers of specific high-risk systems (credit scoring Annex III.5.b, life/health insurance risk assessment Annex III.5.c).

FRIA versus provider risk management. Provider risk management assesses risks inherent in the AI system under intended use. FRIA assesses risks created by specific deployment in actual operational context. A credit scoring model might meet all provider obligations but create discriminatory impacts when deployed in a specific demographic context — FRIA reveals deployment-specific risks.

FRIA must document:

- Deployment description. Describe deployer's processes where AI will be used, decision-making workflows, integration with existing systems, and how AI outputs integrate into final decisions.

- Temporal and population scope. Document period of intended use, frequency of system use, categories of natural persons affected, and expected volume (number of persons affected annually).

- Specific fundamental rights risks. Assess risks of harm to: right to non-discrimination (Article 21 EU Charter), right to privacy and data protection (Articles 7–8), right to human dignity (Article 1), rights of the child (Article 24), right to fair trial and defense (Articles 47–48), right to good administration (Article 41). Don't provide generic analysis — identify specific risks in your deployment context.

- Human oversight implementation measures. Detail who performs oversight, what information they receive, what decision authority they retain, how you prevent automation bias, and what training ensures effective oversight.

- Remediation measures if risks materialize. Document procedures for responding when fundamental rights violations occur — appeal mechanisms, decision reversal processes, compensation, explanation rights.

- Internal governance arrangements. Describe organizational structures supporting fundamental rights protection: responsible departments, reporting lines, decision-making authority for system use limitations.

- Complaint mechanisms. Establish accessible, effective complaint procedures for affected persons.

FRIA submission. Deployers must submit FRIA results to market surveillance authority before system deployment using EU AI Office template (expected 2025). Update FRIA when circumstances materially change — different deployment context, different affected population, or identified risks not previously assessed.
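Tracking the required FRIA elements as structured data makes it easy to check completeness before notifying the authority. This is an illustrative sketch: the field names are hypothetical and will not necessarily match the AI Office template.

```python
from dataclasses import dataclass, fields


@dataclass
class FRIARecord:
    """Illustrative FRIA record; field names are hypothetical and may not
    match the AI Office template."""
    deployment_description: str = ""
    period_and_frequency: str = ""
    affected_categories: str = ""
    persons_affected_per_year: int = 0
    specific_rights_risks: str = ""
    human_oversight_measures: str = ""
    remediation_measures: str = ""
    internal_governance: str = ""
    complaint_mechanism: str = ""

    def missing_elements(self) -> list:
        """Names of elements still empty (0 counts as not yet estimated)."""
        return [f.name for f in fields(self) if not getattr(self, f.name)]


draft = FRIARecord(deployment_description="Loan pre-screening in branch workflow",
                   affected_categories="Retail credit applicants")
print(draft.missing_elements())
```

A deployment pipeline could refuse to mark the FRIA as ready for notification until `missing_elements()` returns an empty list.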

Implementation Timeline and Compliance Roadmap

The staggered timeline creates triage imperative. You cannot address all requirements simultaneously — prioritize based on enforcement dates and system risk classification.

Master Enforcement Calendar

| Date | Milestone | Key Obligations |
|---|---|---|
| August 1, 2024 | Entry into force | Clock started; Member States begin designating authorities |
| February 2, 2025 | Prohibited practices enforceable | Article 5 bans in force (€35M/7%); AI literacy obligations (Article 4) |
| May 2, 2025 | GPAI Codes of Practice due | Voluntary compliance framework for general-purpose AI models |
| August 2, 2025 | Governance and GPAI obligations | National authorities operational; full penalty regime; GPAI transparency and systemic risk assessment |
| February 2, 2026 | Commission guidelines | Official guidance on risk classification with concrete examples |
| August 2, 2026 | Primary enforcement date | High-risk Annex III systems fully enforceable; limited-risk transparency obligations; sandboxes operational |
| August 2, 2027 | Extended product deadline | High-risk AI in regulated products (medical devices, vehicles, machinery, aviation, toys, lifts) |
| August 2, 2030 | Public authority deadline | High-risk AI used by public authorities and placed on the market before August 2, 2026 |
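For triage across a system inventory, the binding dates in the calendar can be encoded so tooling can report which obligations already apply on a given day. This sketch omits the guidance milestones (codes of practice, Commission guidelines); the helper names are hypothetical, and the 2030 entry covers public-authority systems already on the market before August 2, 2026.

```python
from datetime import date
from typing import List, Optional, Tuple

# Binding application dates from the calendar above (guidance milestones omitted).
MILESTONES: List[Tuple[date, str]] = [
    (date(2024, 8, 1), "Entry into force"),
    (date(2025, 2, 2), "Prohibited practices (Article 5); AI literacy (Article 4)"),
    (date(2025, 8, 2), "Governance, penalties, GPAI obligations"),
    (date(2026, 8, 2), "High-risk Annex III systems; transparency obligations"),
    (date(2027, 8, 2), "High-risk AI in Annex I regulated products"),
    (date(2030, 8, 2), "Legacy high-risk AI used by public authorities"),
]


def in_force(on: date) -> List[str]:
    """Milestones whose date has been reached on the given day."""
    return [label for d, label in MILESTONES if d <= on]


def next_milestone(on: date) -> Optional[Tuple[date, str]]:
    """Earliest milestone still in the future, or None after the last one."""
    upcoming = [(d, label) for d, label in MILESTONES if d > on]
    return min(upcoming) if upcoming else None


print(in_force(date(2025, 3, 1)))
# ['Entry into force', 'Prohibited practices (Article 5); AI literacy (Article 4)']
print(next_milestone(date(2025, 3, 1)))
```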

Compliance Timeline by Organization Size

Large enterprises (>250 employees, >€50M revenue) face compressed timelines requiring immediate action and significant resource allocation.

- Q1–Q2 2025: Assessment phase. Complete comprehensive AI system inventory across all business units and geographies. Conduct preliminary risk classification using decision tree. Perform gap analysis comparing current AI governance against AI Act requirements. Identify prohibited systems requiring immediate decommissioning. Estimate compliance costs and resource requirements.

- Q3–Q4 2025: Foundation phase. Establish or upgrade Quality Management System to meet Article 17 requirements, ideally achieving ISO 42001 certification. Assign compliance roles including AI governance committee, risk management owners, technical documentation leads, and conformity assessment coordinators. Begin technical documentation for highest-priority high-risk systems. Decommission or remediate systems failing prohibited practices check. Implement AI literacy training programs.

- Q1–Q2 2026: Implementation phase. Complete conformity assessments for all high-risk systems. Finalize technical documentation. Train deployers on human oversight requirements and system limitations. Register systems in EU database per Article 71. Implement transparency requirements for limited-risk systems. Conduct FRIAs for deployments requiring assessment.

- Q3 2026 onward: Operational phase. Launch post-market monitoring systems. Establish incident reporting procedures aligned to Article 73. Maintain and update technical documentation as systems evolve. Conduct periodic QMS audits. Monitor regulatory developments.

Mid-size companies (50–250 employees) follow similar phasing with compressed timelines and selective prioritization. Adapt existing quality management systems (ISO 9001, sector-specific QMS) rather than building from scratch. Consider selective decommissioning of marginal AI applications where compliance costs exceed business value.

SMEs and startups (<50 employees) benefit from specific accommodations but face resource constraints. Use regulatory sandbox access for testing in controlled environments. Apply for reduced conformity assessment fees (provisions for SMEs in implementing acts). Check whether the 12-month extension for companies with fewer than 50 employees proposed in the Digital Omnibus applies (subject to final adoption).

Compliance Cost Analysis and Business Impact

Understanding the financial implications of compliance is critical for resource planning and leadership buy-in. Costs vary dramatically based on organization size, number of high-risk systems, maturity of existing governance programs, and strategic approach to compliance.

Per-System Cost Estimates

| Cost Item | Estimate | Notes |
|---|---|---|
| Annual compliance per high-risk system | €29,277 | Recurring annual cost after initial setup (CEPS/Commission Impact Assessment) |
| Total annual governance per AI model | €52,227 | Comprehensive governance costs including organizational overhead, legal review |
| Third-party conformity assessment | €16,800–€23,000 | Standard complexity; highly complex systems may reach €50,000+ |
| QMS setup (no existing QMS) | €193,000–€330,000 | One-time; organizations with ISO 9001 reduce by 40–60% |
| Annual QMS maintenance | €71,400 | Internal audits, management reviews, corrective actions |
| Compliance as % of development costs | ~17% | Organizations with mature governance reduce to 7–12% |
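The table's figures can be combined into a rough portfolio projection. This is a simple additive sketch using only the table values: it assumes one organization-wide QMS, counts notified body fees only for systems that need third-party assessment, and may double count overhead already included in the per-system figure.

```python
# Figures from the table above (EUR).
ANNUAL_PER_SYSTEM = 29_277
QMS_SETUP = (193_000, 330_000)
QMS_ANNUAL = 71_400
NOTIFIED_BODY_FEE = (16_800, 23_000)


def setup_cost(systems_needing_notified_body: int, has_iso9001: bool = False):
    """One-time (low, high) range: QMS setup plus third-party assessments."""
    low, high = QMS_SETUP
    if has_iso9001:
        # A 40-60% reduction: best case keeps 40% of the low figure,
        # worst case keeps 60% of the high figure.
        low, high = low * 40 // 100, high * 60 // 100
    n = systems_needing_notified_body
    return low + n * NOTIFIED_BODY_FEE[0], high + n * NOTIFIED_BODY_FEE[1]


def annual_cost(high_risk_systems: int) -> int:
    """Recurring cost: QMS maintenance plus per-system compliance."""
    return QMS_ANNUAL + high_risk_systems * ANNUAL_PER_SYSTEM


print(setup_cost(1))                    # (209800, 353000)
print(setup_cost(1, has_iso9001=True))  # (94000, 221000)
print(annual_cost(3))                   # 159231
```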

Organization-Scale Cost Projections

Large enterprises (>250 employees). Initial investment: €8–15 million. Annual ongoing: €2–5 million. Key cost drivers: multiple high-risk systems across diverse use cases (typical large enterprise deploys 20–50+ AI systems), dedicated compliance FTEs (2–5 at €150–250K total compensation each), third-party conformity assessments for biometric systems and complex applications. Organizations with mature AI governance programs realize 40–50% lower costs by leveraging existing infrastructure.

GPAI model providers. Initial investment: €12–25 million. Annual ongoing: €3–8 million. Key cost drivers: foundation model documentation (training data provenance at massive scale), systemic risk assessment and red teaming programs, Code of Practice compliance, cybersecurity enhancements, copyright compliance and content provenance tracking. Systemic risk designation (>10²⁵ FLOPs of cumulative training compute, or Commission designation) triggers additional obligations substantially increasing costs.

Mid-size companies (50–250 employees). Initial investment: €2–5 million. Annual ongoing: €500K–2 million. Benefit from more focused AI portfolios and ability to selectively prioritize highest-value systems while potentially sunsetting marginal applications where compliance costs exceed benefit.

SMEs (<50 employees). Initial investment: €100–400K. Annual ongoing: €50–150K. SME accommodations significantly reduce burden, but compliance still represents substantial percentage of revenue for startups. Some may find pivoting to minimal-risk applications more viable than high-risk compliance.

Market Opportunities

The compliance burden creates substantial market for trustworthy AI services projected at €17 billion by 2026. Opportunities include:

- Auditing and conformity assessment: Notified Bodies, consulting firms, and specialized AI auditing companies provide third-party assessment services. Shortage of qualified assessors creates premium pricing.

- Compliance technology platforms: Software platforms automating documentation management, risk assessment, post-market monitoring, and compliance tracking reduce manual effort.

- Bias detection and mitigation services: Article 10 data governance and fairness requirements drive demand for bias testing, fairness metrics, and mitigation techniques.

- Synthetic data generation: Training data quality requirements combined with GDPR constraints increase demand for synthetic data satisfying statistical requirements without privacy concerns.

- AI governance consulting: Legal, technical, and strategic consulting helping organizations design governance frameworks, classify systems, and implement compliance programs.

- Training and education: Article 4 AI literacy requirements create demand for training programs across technical staff, deployers, governance personnel, and leadership.

Penalty Structure and Enforcement Architecture

The penalty framework exceeds GDPR in maximum fines, signaling the European Union's commitment to strict enforcement. Organizations must understand both penalty tiers and enforcement mechanisms.

Tiered Penalty Framework

| Tier | Violation Type | Maximum Penalty | Examples |
|---|---|---|---|
| Tier 1 | Prohibited practices (Article 5) | €35 million OR 7% global turnover | Social scoring, real-time biometric ID in public spaces, workplace emotion recognition, facial scraping |
| Tier 2 | High-risk obligations (Chapter III) | €15 million OR 3% global turnover | No conformity assessment, missing technical documentation, inadequate risk management, no human oversight |
| Tier 3 | Transparency violations | €15 million OR 3% global turnover | Chatbot not disclosing AI nature, unmarked deepfakes, emotion recognition without notice |
| Tier 4 | Misleading information to authorities | €7.5 million OR 1% global turnover | False conformity assessment statements, incomplete documentation, misleading responses to investigations |

SME Protection Provision. For SMEs and startups, regulators must impose the LOWER of fixed amount or turnover percentage. For a startup with €5 million revenue facing Tier 2 violation: 3% turnover equals €150,000, which is lower than the €15 million fixed amount, so €150,000 applies. This creates dramatically different penalty exposure based on organization size — an intentional policy choice balancing enforcement against economic viability for smaller players.
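The cap logic reduces to one comparison: the higher of the fixed amount and the turnover percentage for most organizations, the lower for SMEs. This sketch computes only the statutory maximum; authorities set actual amounts below it based on the factors in Article 99(7). The function name is hypothetical.

```python
# Article 99 caps per tier: (fixed amount in EUR, percent of worldwide annual turnover).
TIERS = {
    1: (35_000_000, 7),  # prohibited practices
    2: (15_000_000, 3),  # high-risk obligations
    3: (15_000_000, 3),  # transparency obligations
    4: (7_500_000, 1),   # misleading information to authorities
}


def max_fine(tier: int, worldwide_turnover_eur: int, is_sme: bool) -> int:
    """Upper bound of the fine: the higher of the two caps, or the lower for SMEs."""
    fixed, pct = TIERS[tier]
    turnover_based = worldwide_turnover_eur * pct // 100
    return min(fixed, turnover_based) if is_sme else max(fixed, turnover_based)


print(max_fine(2, 5_000_000, is_sme=True))       # 150000
print(max_fine(1, 2_000_000_000, is_sme=False))  # 140000000
print(max_fine(2, 100_000_000, is_sme=False))    # 15000000
```

The last two lines show the asymmetry: for a large company the percentage dominates, while for a mid-size non-SME the fixed amount sets the ceiling even though 3% of turnover is far lower.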

Enforcement Architecture

EU AI Office. Central coordinating body, established within the European Commission in 2024; its governance role under the Act applies from August 2, 2025. Primary responsibilities: enforcement authority for general-purpose AI models (particularly systemic risk GPAI), coordination of cross-border enforcement, development of guidance and technical standards, maintenance of EU database, support to Member State authorities. Unlike the EDPB under GDPR, which is a board of national authorities, the AI Office sits inside the Commission; the European AI Board is the closer EDPB analogue.

National competent authorities. Each Member State designates market surveillance authorities responsible for enforcement within their jurisdiction. National authorities handle most AI systems enforcement except GPAI. Germany designated Bundesnetzagentur (Federal Network Agency), France designated CNIL (data protection authority), and Spain created a dedicated agency, AESIA (Spanish Agency for the Supervision of Artificial Intelligence). Other Member States follow similar patterns designating existing regulatory bodies with technical capacity.

European AI Board. Coordinates cross-border enforcement ensuring consistent application across Member States. The Board comprises one representative per Member State; the EDPS participates as an observer and the AI Office attends without voting rights, while stakeholders contribute through a separate advisory forum. Issues opinions on classification questions, harmonized standards, and enforcement approaches.

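Coordination with sectoral regulators. Financial services enforcement runs through national financial supervisors, which act as market surveillance authorities for regulated financial institutions (Article 74(6)), within the EU supervisory system that includes EBA (banking), ESMA (securities), and EIOPA (insurance). Medical device enforcement involves national medical device authorities. The Act requires coordination between AI Act enforcers and existing sectoral authorities to prevent gaps or conflicts.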

Enforcement status (early 2025). No fines issued yet. Prohibited practices became enforceable February 2, 2025. Broader penalty regime activates August 2, 2025. Main enforcement wave expected post-August 2026. Early enforcement likely focuses on egregious prohibited practices violations and failure to decommission banned systems.

"Expect enforcement to follow GDPR pattern: initial guidance period with relatively few fines, then increasing enforcement activity as authorities build capacity and organizations exhaust excuse of novelty. Organizations cannot count on enforcement grace period — authorities may make examples of clear violations to establish credibility."

Sector-Specific Compliance Guidance

While the AI Act applies horizontally across industries, specific sectors face unique compliance challenges requiring tailored approaches.

Healthcare AI and Medical Devices

AI systems qualifying as medical devices under Medical Device Regulation (EU) 2017/745 or In Vitro Diagnostic Regulation (EU) 2017/746 face dual compliance requirements. The AI Act and MDR/IVDR operate in parallel, with integrated conformity assessment.

Key integration points. Technical documentation can be combined into single integrated file satisfying both MDR Annex II/III and AI Act Annex IV. Cross-reference between documents rather than duplicating content. Notified Bodies with dual accreditation perform joint conformity assessments evaluating both clinical safety (MDR) and AI-specific requirements (AI Act) in unified process.

Extended compliance timeline applies: August 2, 2027 for AI embedded in regulated medical devices. Risk management must address both clinical safety and AI-specific risks. ISO 14971 (medical device risk management) provides foundation, supplemented with AI-specific considerations: bias creating differential clinical performance across patient populations, model drift degrading diagnostic accuracy, adversarial robustness against deliberate manipulation, performance degradation from distribution shift as disease prevalence changes.

Required actions. Conduct gap analysis comparing MDR technical files against AI Act Annex IV requirements. Integrate AI-specific controls into ISO 13485 Quality Management System — most medical device manufacturers already operate ISO 13485 QMS satisfying substantial portion of AI Act Article 17 requirements. Contact Notified Body regarding dual accreditation status. Plan for joint assessment approach rather than separate parallel assessments.

Post-market surveillance integration. MDR post-market surveillance requirements (Articles 83–86) and AI Act post-market monitoring (Article 72) overlap substantially. Create unified post-market program satisfying both regulations. MDR vigilance deadlines (Article 87: 2, 10, or 15 days depending on severity) mirror the AI Act tiers, and for AI systems covered by MDR or IVDR, Article 73(10) limits AI Act serious incident notification to fundamental rights infringements, with other incidents reported through MDR/IVDR vigilance.

Financial Services AI

Credit scoring and insurance risk assessment are explicitly classified as high-risk under Annex III, making financial institutions primary targets for AI Act enforcement. For regulated financial institutions, the national financial supervisory authority serves as market surveillance authority under Article 74(6); the EU-level EBA, ESMA, and EIOPA do not take on that role directly.

Regulatory overlap management. Multiple frameworks intersect:

- GDPR Article 22 provides right not to be subject to solely automated decision-making with legal/similarly significant effects. AI Act human oversight requirements (Article 14) run parallel but not identical to GDPR Article 22. Financial institutions must implement human review mechanisms satisfying both frameworks.

- DORA (Digital Operational Resilience Act) requires financial entities to manage ICT third-party risk including AI service providers. DORA's third-party rules (contractual provisions, exit strategies, concentration risk) apply on top of AI Act provider/deployer obligations whenever an AI system comes from an external provider.

- Capital Requirements Directive record-keeping and documentation requirements for credit risk models align with AI Act technical documentation. Banks already maintain model documentation for regulatory capital purposes — extend existing documentation to cover AI Act-specific requirements.

ECB 2025 findings indicate approximately 50% of banks have dedicated AI policies, but significant governance gaps remain. Common weaknesses: inadequate model risk management for AI/ML models, insufficient fairness testing across demographic groups, weak human oversight over credit decisions, incomplete documentation of model development and validation.

Required actions. Map AI systems against Annex III categories 5(b) credit scoring and 5(c) insurance risk assessment. Implement explainability for credit decisions using Explainable AI (XAI) techniques: SHAP (SHapley Additive exPlanations) providing feature importance explanations, LIME (Local Interpretable Model-agnostic Explanations) generating local approximations, attention mechanisms showing which input features drove predictions, and counterfactual explanations demonstrating what would change a decision outcome. Establish human review mechanisms for adverse actions. Integrate AI governance into existing financial compliance frameworks such as Basel Committee model risk management frameworks.
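For a linear (logistic regression) credit score, SHAP values have a closed form: with independent features, each feature's contribution is its weight times its deviation from a background mean, and the contributions sum to the score difference from that baseline. A single-feature counterfactual is equally simple to compute. The model, features, and threshold below are hypothetical, chosen only to illustrate both techniques.

```python
# Hypothetical logistic-regression credit model: weights act on the log-odds score.
BIAS = -1.0
WEIGHTS = {"income_k": 0.04, "debt_ratio": -3.0, "late_payments": -0.5}
BACKGROUND_MEAN = {"income_k": 50.0, "debt_ratio": 0.3, "late_payments": 1.0}


def score(x):
    """Linear score (log-odds); approve when score >= 0 in this example."""
    return BIAS + sum(w * x[k] for k, w in WEIGHTS.items())


def linear_shap(x):
    """Exact SHAP values for a linear model with independent features."""
    return {k: w * (x[k] - BACKGROUND_MEAN[k]) for k, w in WEIGHTS.items()}


def single_feature_counterfactual(x, feature, threshold=0.0):
    """Change in one feature needed to reach the threshold, others held fixed."""
    w = WEIGHTS[feature]
    if w == 0:
        return None
    return (threshold - score(x)) / w


applicant = {"income_k": 40.0, "debt_ratio": 0.5, "late_payments": 2.0}
print(round(score(applicant), 3))  # -1.9, so declined
print({k: round(v, 3) for k, v in linear_shap(applicant).items()})
# {'income_k': -0.4, 'debt_ratio': -0.6, 'late_payments': -0.5}
print(round(single_feature_counterfactual(applicant, "income_k"), 2))  # 47.5
```

The same counterfactual for `debt_ratio` asks for a drop of about 0.63, which would push the ratio below zero. Production counterfactual tooling therefore needs plausibility constraints, and non-linear models need general-purpose explainers rather than this closed form.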

Law Enforcement and Biometrics

Law enforcement agencies face strictest controls under AI Act, with most real-time biometric identification prohibited and extensive safeguards required for permitted uses. Biometric systems require third-party conformity assessment unless harmonized standards or common specifications have been applied in full (Article 43(1)).

Permitted high-risk uses with full compliance: post-event facial recognition for serious crime investigation (crimes listed in Annex II punishable by a maximum custodial sentence of at least four years), crime victim risk assessment, evidence reliability evaluation, and criminal recidivism assessment when not solely based on profiling.

Mandatory safeguards for real-time biometric identification exceptions. Article 5(1)(h) prohibits real-time RBI in publicly accessible spaces for law enforcement, except under narrow exceptions that require comprehensive safeguards:

- Prior authorization. Judicial or independent administrative authority must authorize use before deployment in each specific case. Authorization must be time-limited, geographically bounded, and specify legitimate objective. No blanket authorizations for general surveillance.

- Fundamental Rights Impact Assessment. Article 27 FRIA mandatory before deployment. Must evaluate impact on presumption of innocence, right to privacy, potential discriminatory effects, and proportionality.

- EU database registration. Each use must be registered in EU database (Article 71).

- Scope limitations. Authorization must specify precise geographic scope, temporal scope, and personal scope.

- Annual reporting. Law enforcement agencies must report annually to national authority and Commission on: number of times real-time RBI used, legal basis, purposes and results, evaluation of necessity and proportionality.

Critical Infrastructure AI

AI systems serving as safety components in critical digital infrastructure, road traffic management, and water, gas, electricity, and heating supply are classified high-risk due to potential for large-scale harm from failure. Critical infrastructure designation triggers both AI Act requirements and NIS2 Directive cybersecurity obligations.

Specific requirements beyond standard high-risk compliance:

- Comprehensive failure mode analysis. Risk management must address cascading failure scenarios. Model failure modes: incorrect predictions causing inappropriate control actions, adversarial attacks manipulating sensor inputs, data poisoning corrupting training data, availability attacks preventing system operation during critical periods.

- Article 15 cybersecurity resilience testing. Critical infrastructure systems must undergo enhanced cybersecurity testing including penetration testing, adversarial machine learning attacks, resilience testing under degraded conditions, and recovery testing demonstrating graceful degradation.

- Manual override capability. Human oversight for critical infrastructure must include manual override allowing operators to disable AI and revert to manual control. Operators require real-time visibility into AI decision-making and confidence levels.

- NIS2 Directive integration. Critical infrastructure operators subject to NIS2 (Directive 2022/2555) must integrate AI Act compliance into NIS2 cybersecurity risk management. NIS2 requires comprehensive cybersecurity risk assessment, staged incident reporting (early warning within 24 hours, incident notification within 72 hours, final report within one month), business continuity including backup systems, and supply chain security for AI providers.

- Serious incident reporting within 2 days. A serious and irreversible disruption of critical infrastructure is a serious incident that must be reported to the market surveillance authority immediately, and no later than 2 days after the provider becomes aware of it, under Article 73(3). This is the shortest reporting timeline in the AI Act, reflecting criticality.

Framework Comparisons and Alignment Mapping

The AI Act doesn't exist in isolation. Organizations operating globally must navigate multiple AI governance frameworks requiring strategic alignment approach rather than separate parallel compliance programs.

EU AI Act vs. GDPR Alignment

Both regulations apply when AI processes personal data, creating overlapping requirements requiring careful integration.

Scope relationship. GDPR applies to personal data processing regardless of whether AI is involved. AI Act applies to AI systems regardless of whether personal data is processed. When AI processes personal data: both regulations apply simultaneously. When AI processes only non-personal data (industrial sensor data, fully anonymized data): only AI Act applies.

Risk assessment approaches. GDPR requires Data Protection Impact Assessment (DPIA) for processing likely to result in high risk to rights and freedoms (Article 35). AI Act requires Article 9 risk management system for high-risk AI systems. When high-risk AI processes personal data: conduct both DPIA and risk management system. Use integrated approach addressing both frameworks in unified assessment rather than separate parallel assessments. Article 13 AI Act information to deployers provides input for GDPR Article 30 processing records and Article 35 DPIA.

Special category data handling. GDPR Article 9 generally prohibits processing special categories of personal data unless specific condition applies. AI Act Article 10(5) creates explicit exemption: providers may process special category data to the extent strictly necessary for bias monitoring, detection, and correction, subject to appropriate safeguards. This provides legal basis previously unclear under GDPR — you can process racial/ethnic data specifically to detect and mitigate bias in AI training data or model outputs. Document legal basis clearly: reference AI Act Article 10(5) as legal basis for bias detection processing.

Automated decision-making rights. GDPR Article 22 provides right not to be subject to solely automated decision-making producing legal effects or similarly significantly affecting the data subject. AI Act Article 14 requires human oversight for high-risk AI but doesn't prohibit automated decision-making. Integration approach: high-risk AI making decisions with legal/significant effects must satisfy BOTH Article 22 (right to human review) AND Article 14 (human oversight design).

Penalty comparison. GDPR maximum: €20 million or 4% global turnover. AI Act maximum: €35 million or 7% global turnover. AI Act penalties higher, but violations may trigger both frameworks simultaneously.

Incident reporting timelines. GDPR: personal data breach notification to supervisory authority within 72 hours (Article 33). AI Act: serious incident reporting within 2–15 days depending on severity (Article 73). When an incident is both a personal data breach and an AI Act serious incident, each deadline runs separately, so plan around the earliest one. That is usually the 72-hour GDPR deadline, but a widespread infringement or critical infrastructure disruption triggers the 2-day AI Act deadline, which falls first.
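Incident response tooling can compute each authority's deadline and surface the earliest. This sketch assumes a single shared "became aware" timestamp for both regimes, which may not hold in practice, and treats the statutory limits as outer bounds: the AI Act also requires reporting "immediately" once the link to the AI system is established. The category keys are hypothetical labels.

```python
from datetime import datetime, timedelta
from typing import Dict, Optional

# AI Act Article 73 maximum delays after the provider becomes aware.
AI_ACT_DEADLINES = {
    "widespread_or_critical_infrastructure": timedelta(days=2),  # Art. 73(3)
    "death": timedelta(days=10),                                 # Art. 73(4)
    "other_serious_incident": timedelta(days=15),                # Art. 73(2)
}
GDPR_BREACH_DEADLINE = timedelta(hours=72)  # GDPR Art. 33


def reporting_deadlines(aware_at: datetime,
                        ai_act_category: Optional[str] = None,
                        personal_data_breach: bool = False) -> Dict[str, datetime]:
    """Deadline per authority, assuming one shared 'became aware' timestamp."""
    deadlines = {}
    if ai_act_category is not None:
        deadlines["AI Act market surveillance authority"] = (
            aware_at + AI_ACT_DEADLINES[ai_act_category])
    if personal_data_breach:
        deadlines["GDPR supervisory authority"] = aware_at + GDPR_BREACH_DEADLINE
    return deadlines


def first_deadline(deadlines: Dict[str, datetime]) -> Optional[datetime]:
    """Earliest deadline across all authorities, or None if nothing is reportable."""
    return min(deadlines.values()) if deadlines else None


aware = datetime(2026, 9, 1, 9, 0)
both = reporting_deadlines(aware, "other_serious_incident", personal_data_breach=True)
print(first_deadline(both))  # 2026-09-04 09:00:00 (GDPR's 72 hours comes first)
grid = reporting_deadlines(aware, "widespread_or_critical_infrastructure", True)
print(first_deadline(grid))  # 2026-09-03 09:00:00 (AI Act's 2 days comes first)
```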

NIST AI Risk Management Framework Mapping

Organizations seeking global compliance can map NIST AI RMF functions to EU AI Act requirements, creating unified governance satisfying both frameworks.

- GOVERN function (culture, policies, roles, accountability) maps to Article 17 Quality Management System and Article 4 AI literacy requirements. Use NIST GOVERN to design governance framework, then document per Article 17 requirements for EU compliance.

- MAP function (context, risk identification, categorization) maps to Article 6 risk classification and the Article 9 risk management system. Extend NIST MAP to include AI Act risk classification as an explicit, required categorization dimension.

- MEASURE function (metrics, assessment, testing, validation) maps to the Article 15 accuracy, robustness, and cybersecurity requirements and to Article 10 data quality validation. Use NIST MEASURE to define metrics and thresholds specific to each use case.

- MANAGE function (treatment, monitoring, response) maps to Article 72 post-market monitoring, Article 20 corrective actions, and Article 73 serious incident reporting. Use NIST MANAGE practices as the implementation approach for Articles 20, 72, and 73.
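One way to keep this crosswalk usable is to store it as data and query it in both directions. The sketch below encodes exactly the mappings listed above. The dictionary and helper names are hypothetical. It also adds a gap check: any AI Act article that no RMF function covers in this crosswalk needs an explicit owner.

```python
# NIST AI RMF function -> EU AI Act articles, as mapped in the list above.
RMF_TO_AI_ACT: dict[str, set[str]] = {
    "GOVERN": {"Art. 4", "Art. 17"},
    "MAP": {"Art. 6", "Art. 9"},
    "MEASURE": {"Art. 10", "Art. 15"},
    "MANAGE": {"Art. 20", "Art. 72", "Art. 73"},
}


def functions_for(article: str) -> list[str]:
    """RMF functions whose practices address the given AI Act article."""
    return sorted(fn for fn, articles in RMF_TO_AI_ACT.items() if article in articles)


def uncovered(required: list[str]) -> list[str]:
    """Required articles that no RMF function in this crosswalk covers."""
    covered = set().union(*RMF_TO_AI_ACT.values())
    return sorted(set(required) - covered)


print(functions_for("Art. 72"))                      # → ['MANAGE']
print(uncovered(["Art. 9", "Art. 11", "Art. 14"]))  # → ['Art. 11', 'Art. 14']
```

In this crosswalk, Article 11 technical documentation and Article 14 human oversight are not assigned to any function. The gap check therefore flags them for explicit ownership.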

Recommended approach. Use the NIST AI RMF as the foundational risk management framework, providing structure and best practices. Layer the EU AI Act's prescriptive requirements on top of that foundation to address specific legal obligations. Create unified documentation that satisfies both frameworks: a NIST RMF profile documents your choices and approaches, and the AI Act technical documentation references that profile to demonstrate compliance.

ISO/IEC 42001 Integration

ISO/IEC 42001:2023 (Artificial Intelligence Management System) provides a certifiable framework addressing approximately 40–50% of high-level EU AI Act requirements. Organizations should strongly consider ISO 42001 certification as a foundation for compliance.

| AI Act Requirement | ISO 42001 Clause | Relationship |
|---|---|---|
| Article 9 Risk Management | Clauses 8.2–8.3 AI risk assessment and treatment | ISO 42001 provides management system framework; AI Act specifies legal obligations |
| Article 10 Data Governance | Annex A data management controls | ISO 42001 provides control framework; AI Act specifies legal requirements |
| Articles 11/18 Documentation | Clause 7.5 documented information | ISO 42001 establishes documentation management; AI Act specifies required content (Annex IV) |
| Article 14 Human Oversight | Transparency and human oversight controls | ISO 42001 provides principles; AI Act creates legal obligations |
| Article 17 QMS | Clauses 4–10 complete management system | ISO 42001 management system covers much of the Article 17 QMS structure; AI Act-specific procedures (e.g., serious incident reporting) must be added |
| Article 72 Post-Market Monitoring | Clause 9 monitoring, measurement, analysis | ISO 42001 monitoring framework operationalizes Article 72 obligations |

Certification benefits for EU AI Act compliance:

- Demonstrable governance maturity. ISO 42001 certification provides third-party validation of AI management system maturity, demonstrating to authorities that your organization takes AI governance seriously.

- Reduced conformity assessment burden. Notified Bodies conducting conformity assessments review QMS documentation. ISO 42001 certification can reduce that review burden, since a certified management system typically requires less extensive examination than undocumented processes.

- Foundation for Article 6(3) derogation. ISO 42001-certified organizations have a governance framework that supports credible derogation determinations.

- Unified global compliance. ISO 42001 is gaining traction internationally as an AI governance standard. A single certification supports EU AI Act compliance, voluntary frameworks in other jurisdictions, and, increasingly, customer and partner requirements.

- Continuous improvement culture. ISO standards require continual improvement (Clause 10), aligning with the AI Act's lifecycle approach.

Recommended approach. Organizations serious about AI governance should pursue ISO 42001 certification as a strategic compliance foundation. Timeline: begin implementation in Q2–Q3 2025 and target certification by Q1–Q2 2026, so that a certified management system is in place before high-risk enforcement begins in August 2026. Cost: certification adds €30,000–€80,000 to compliance costs but creates substantial value beyond the AI Act.

Strategic Recommendations and Implementation Best Practices

Based on detailed analysis of requirements, costs, and timelines, organizations should prioritize three strategic imperatives for 2025–2026 compliance.

Priority 1: Complete AI System Inventory Immediately

The February 2025 prohibited practices deadline has passed. Organizations cannot claim ignorance of systems violating Article 5. An immediate, comprehensive inventory is non-negotiable.

Inventory methodology. Survey all business units, departments, and subsidiaries to identify AI systems in development, testing, or production. Cast a wide net initially: include systems you suspect might not be AI to ensure comprehensive coverage. It is better to evaluate and exclude a system than to miss one.

Information to collect for each system:

- System name and description

- Business purpose and use case

- Deployment status (development/testing/production)

- Technical approach (machine learning type, algorithms)

- Data sources and types processed

- Affected stakeholders (employees, customers, public)

- Geographic scope (where the system is deployed and where it affects people)

- Provider information (internal development vs. external vendor)

- Deployment timeline (when launched or planned launch)
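The fields above translate naturally into a structured record, which makes the inventory easy to validate and query. This is a minimal sketch. The class, field, and method names are hypothetical, and the example system is illustrative only. `missing_fields()` flags required entries that are still blank, which helps when collecting data from many business units.

```python
from dataclasses import dataclass, fields
from datetime import date
from enum import Enum
from typing import Optional


class DeploymentStatus(Enum):
    DEVELOPMENT = "development"
    TESTING = "testing"
    PRODUCTION = "production"


@dataclass
class AISystemRecord:
    """One inventory entry, mirroring the information-to-collect list."""
    name: str
    description: str
    business_purpose: str
    status: DeploymentStatus
    technical_approach: str          # e.g. ML type and algorithms
    data_sources: list[str]          # sources and types of data processed
    affected_stakeholders: list[str]
    deployed_in: list[str]           # where the system is deployed
    affects_people_in: list[str]     # where its outputs affect people
    provider: str                    # "internal" or the external vendor's name
    launch_date: Optional[date] = None   # actual or planned launch
    risk_tier: Optional[str] = None      # filled in during classification
    classification_rationale: str = ""

    def missing_fields(self) -> list[str]:
        """Names of required fields that are still empty."""
        optional = {"launch_date", "risk_tier", "classification_rationale"}
        return [f.name for f in fields(self)
                if f.name not in optional and not getattr(self, f.name)]


record = AISystemRecord(
    name="CV screener",  # illustrative example
    description="Ranks incoming job applications",
    business_purpose="Recruitment shortlisting",
    status=DeploymentStatus.PRODUCTION,
    technical_approach="Gradient-boosted trees",
    data_sources=[],  # not yet collected
    affected_stakeholders=["job applicants"],
    deployed_in=["DE", "FR"],
    affects_people_in=["DE", "FR"],
    provider="external vendor",
)
print(record.missing_fields())  # → ['data_sources']
```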

Risk classification determination. For each identified system, work systematically through the risk classification decision tree. Document the classification and the reasoning behind it. Flag prohibited systems for immediate decommissioning. Identify high-risk systems that require full compliance by August 2026. Categorize limited-risk systems that require transparency measures, and record minimal-risk systems for completeness.

Inventory maintenance. The AI system inventory is a living document requiring ongoing maintenance. Establish a process for updating it when new systems are deployed, existing systems undergo major modifications, systems are decommissioned, or use cases expand into new domains.

Immediate actions based on the inventory: decommission prohibited systems immediately, with no exceptions and no gradual phase-out, since the Article 5 deadline has already passed. For high-risk systems, prioritize by deployment date, business criticality, and regulatory risk. For limited-risk systems, implement transparency measures. For minimal-risk systems, document the classification reasoning.
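The routing and ordering described above can be sketched as follows. This is a hypothetical illustration, not a classification tool. The tier labels, the 1-to-5 criticality and regulatory-risk scores, and the example systems are all assumptions, and each tier must still come from the Article 5 and Article 6 analysis. High-risk systems are ordered by earliest deployment date first, then by higher business criticality, then by higher regulatory risk, following the order of the criteria in the text.

```python
from dataclasses import dataclass
from datetime import date

# Immediate action per risk tier, as described in the text.
ACTIONS = {
    "prohibited": "Decommission immediately (Article 5)",
    "high": "Plan full compliance before August 2026",
    "limited": "Implement transparency measures",
    "minimal": "Document classification reasoning",
}
TIER_ORDER = ["prohibited", "high", "limited", "minimal"]


@dataclass
class ClassifiedSystem:
    name: str
    tier: str              # one of TIER_ORDER
    deployed_on: date      # actual or planned deployment date
    criticality: int       # hypothetical score: 1 (low) to 5 (high)
    regulatory_risk: int   # hypothetical score: 1 (low) to 5 (high)


def action_plan(systems: list[ClassifiedSystem]) -> list[tuple[str, str]]:
    """Return (system name, action) pairs in the order they should be tackled."""
    unknown = [s.name for s in systems if s.tier not in ACTIONS]
    if unknown:
        raise ValueError(f"unclassified systems: {unknown}")

    def priority(s: ClassifiedSystem):
        # Tier first; within a tier, earliest deployment, then the
        # highest criticality and regulatory risk (hence the negation).
        return (TIER_ORDER.index(s.tier), s.deployed_on,
                -s.criticality, -s.regulatory_risk)

    return [(s.name, ACTIONS[s.tier]) for s in sorted(systems, key=priority)]


portfolio = [  # illustrative systems only
    ClassifiedSystem("Website chatbot", "limited", date(2024, 1, 15), 2, 1),
    ClassifiedSystem("CV screening", "high", date(2024, 2, 1), 4, 5),
    ClassifiedSystem("Workplace emotion recognition", "prohibited", date(2024, 5, 1), 3, 5),
    ClassifiedSystem("Credit scoring", "high", date(2023, 6, 1), 5, 5),
]
for name, action in action_plan(portfolio):
    print(f"{name}: {action}")
```

Sorting on a tuple key keeps the rule transparent. Changing the prioritization policy means reordering the tuple, not rewriting the logic.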

Priority 2: Establish Documentation Infrastructure Before Individual System Compliance

Organizations without an existing Quality Management System face setup costs of €193,000–€330,000, but this investment applies to all current and future high-risk systems. Prioritize shared infrastructure over individual systems to gain efficiency.

QMS implementation approach. Decide whether to build from scratch or extend an existing quality management system. Organizations with ISO 9001, ISO 13485 (medical devices), AS9100 (aerospace), or IATF 16949 (automotive) can adapt their existing QMS to incorporate AI Act requirements, reducing costs by 40–60%. Consider ISO 42001 certification as a QMS foundation.

Core QMS components for AI Act compliance:

- AI governance policy establishing organizational commitment and governance structure

- Documented procedures for risk management (Article 9), data governance (Article 10), human oversight (Article 14), accuracy/robustness/cybersecurity validation (Article 15), technical documentation preparation (Articles 11/18), conformity assessment management, post-market monitoring (Article 72), serious incident reporting (Article 73), and corrective action management (Article 20)

- Role definitions: AI governance committee or board, risk management owners, data governance leads, technical documentation authors, conformity assessment coordinators, post-market monitoring analysts, and incident response personnel

- Training programs addressing Article 4 AI literacy for all personnel plus specialized training for roles with AI responsibilities

- Documentation management infrastructure: a central repository, version control, access control, retention policies that keep documentation for 10 years after the system is placed on the market or put into service (Article 18), and templates standardizing documentation across systems
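Retention policies are easiest to enforce when the end date is computed rather than tracked by hand. This is a minimal sketch. It assumes the Article 18 period runs 10 years from the date the system was placed on the market or put into service. The helper names are hypothetical. Rolling 29 February forward to 1 March, so that documents are kept slightly longer and never shorter, is a conservative assumption, not a rule from the Act.

```python
from datetime import date


def retention_end(placed_on_market: date, years: int = 10) -> date:
    """Date on which the retention period ends (assumed 10 years, Article 18)."""
    try:
        return placed_on_market.replace(year=placed_on_market.year + years)
    except ValueError:
        # 29 February with no counterpart in the target year:
        # roll forward to 1 March so documents are never disposed of early.
        return date(placed_on_market.year + years, 3, 1)


def may_dispose(placed_on_market: date, today: date) -> bool:
    """True only once the retention period has fully elapsed."""
    return today > retention_end(placed_on_market)


print(retention_end(date(2026, 8, 2)))   # → 2036-08-02
print(retention_end(date(2028, 2, 29)))  # → 2038-03-01
```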

Efficiency multiplier. The first high-risk system incurs the full QMS setup cost plus system-specific documentation. Subsequent systems incur only system-specific documentation costs, leveraging the existing QMS infrastructure. For an organization with 10 high-risk systems, the QMS approach costs €193K + (10 × €30K) = €493K in total. Without a shared QMS, each system needs its own governance arrangements, which costs significantly more and attracts closer scrutiny from authorities.
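The same arithmetic generalizes to any portfolio size. The sketch below uses the text's figures: €193K one-off QMS setup and €30K per system. It treats the per-system figure as a single flat amount and ignores ongoing annual costs. The function names are hypothetical. Dividing the total by the number of systems shows how quickly the setup cost is amortized.

```python
def portfolio_cost_eur(n_systems: int, qms_setup: int = 193_000,
                       per_system: int = 30_000) -> int:
    """Shared-QMS model: one-off setup plus system-specific documentation."""
    if n_systems < 1:
        raise ValueError("need at least one high-risk system")
    return qms_setup + n_systems * per_system


def cost_per_system_eur(n_systems: int) -> float:
    """Average cost per system under the shared-QMS model."""
    return portfolio_cost_eur(n_systems) / n_systems


for n in (1, 5, 10):
    print(n, portfolio_cost_eur(n), round(cost_per_system_eur(n)))
# → 1 223000 223000
# → 5 343000 68600
# → 10 493000 49300
```

Under this model, the average cost per system falls from €223K for a single system to €49.3K at ten systems.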

Priority 3: Leverage Regulatory Framework Strategically

The AI Act includes accommodations and strategic opportunities that organizations should exploit rather than treat the regulation purely as a compliance burden.

SME provisions and benefits. Small and Medium-sized Enterprises receive specific accommodations:

- Fines capped at the lower of the fixed amount or the turnover percentage (Article 99(6)).
- Reduced conformity assessment fees (implementing acts expected 2025).
- Simplified documentation templates (expected in Commission guidelines).
- Priority access to regulatory sandboxes.
- A proposed 12-month extension for companies with fewer than 50 employees (Digital Omnibus).

SMEs should actively claim these benefits rather than assume they apply automatically.

Regulatory sandbox access. Article 57 requires each Member State to establish at least one AI regulatory sandbox: a controlled environment for developing and testing AI under regulatory supervision. Sandboxes provide:

- Guidance from the national authority during development.
- The ability to test high-risk AI before full compliance.
- Protection from administrative fines for AI Act infringements when participants follow the sandbox plan and the authority's guidance in good faith. Liability for damage to third parties remains.
- Regulatory learning that informs guidance development.

Apply early, because sandbox capacity is limited.

Harmonized standards as safe harbor. Once harmonized standards are published in the Official Journal of the European Union (expected throughout 2025–2026), compliance with them creates a presumption of conformity with the corresponding AI Act requirements. Monitor European standardization requests and the work of the CEN-CENELEC AI committee. Plan to adopt harmonized standards as soon as they are available. For biometric systems under Annex III, point 1, applying them is what opens the internal control conformity assessment pathway. Systems under Annex III, points 2 to 8, follow internal control regardless.

ISO 42001 certification positioning. ISO 42001 certification offers competitive differentiation by demonstrating AI governance maturity to customers, partners, and authorities. Its marketing value extends beyond compliance: certified organizations can promote trustworthy AI governance as a differentiator. Earlier certification (2025 rather than 2026) creates a first-mover advantage.

Global harmonization opportunities. Organizations operating globally should pursue unified compliance:

- Use the NIST AI RMF, the voluntary US framework, as the foundation.
- Layer ISO 42001 on top for international certification.
- Add EU AI Act legal requirements for the European market.
- Monitor developments in the UK, Singapore, China, and other jurisdictions for emerging requirements.

A single governance framework with jurisdiction-specific overlays is more efficient than fragmented regional approaches.

Strategic Business Benefits Beyond Compliance

Organizations viewing the AI Act purely as a cost burden miss strategic opportunities. Benefits include:

- Reduced AI incidents and associated costs (reputational damage, litigation, customer losses)

- Enhanced customer trust enabling AI adoption in risk-sensitive sectors

- Competitive advantage over less compliant competitors

- Reduced technical debt from disciplined development practices

- Improved AI system quality from rigorous testing and monitoring

The August 2026 deadline leaves approximately 18 months from publication of this guide for organizations to complete comprehensive compliance programs. This timeline is adequate for well-resourced organizations with leadership commitment and clear prioritization — but requires immediate action.

Conclusion: The Path Forward

The European Union AI Act represents the most significant development in technology regulation since GDPR. Unlike GDPR's focus on personal data protection, the AI Act addresses broader concerns: human safety, fundamental rights protection, preservation of democratic values, and prevention of technological systems that threaten human dignity and autonomy.

The regulation is now law. Prohibited practices are enforceable. The governance architecture is operational. The August 2026 deadline approaches rapidly. Organizations can no longer afford to wait for perfect clarity — act on current understanding while monitoring guidance development.

Three Core Principles

Principle 1: Start with a prohibited practices audit. The highest penalty tier (€35M or 7% of global turnover), combined with immediate enforceability, creates acute risk. A comprehensive prohibited practices audit is a prerequisite for all other compliance activity. If you are operating prohibited systems, compliance costs are irrelevant: you must decommission them. Don't assume your systems are safe. Work through Article 5 systematically with technical personnel who understand how the systems actually operate, not just their business descriptions.

Principle 2: Invest in infrastructure, not just individual systems. Quality Management Systems, technical documentation frameworks, post-market monitoring infrastructure, and governance committees create leverage across multiple AI systems. Organizations with 5+ high-risk systems achieve rapid ROI on infrastructure investment. Organizations with smaller portfolios should consider whether selective system decommissioning is more economical than full compliance infrastructure for marginal applications.

Principle 3: Integrate rather than isolate. The AI Act intersects with GDPR, sector-specific regulations, international frameworks, and voluntary standards. Organizations maintaining separate parallel compliance programs for each framework waste resources and create inconsistencies authorities will identify. Build unified governance satisfying multiple frameworks simultaneously through strategic integration.